What Is The Difference Between Sound And Audio?

When dealing with microphones and other audio/sound equipment, the terms “sound” and “audio” are commonplace. Knowing the difference between these two terms will help you understand professionals in the field and communicate more effectively when using microphones and other sound/audio equipment!

What is the difference between sound and audio? The key difference between sound and audio is their form of energy. Sound is mechanical wave energy (longitudinal sound waves) that propagates through a medium, causing variations in pressure within the medium. Audio is made of electrical energy (analog or digital signals) that represents sound electrically.

This quick answer covers the fundamental difference between sound and audio, but there is more to know than that. In this article, we'll discuss further differences between sound and audio along with how microphones interact with these two forms of energy.

If you'd like to support my work and learn more about music production, please consider subscribing to my Substack.

Table Of Contents

- What Is Sound?

- What Is Audio?

- How Are Sound And Audio Measured And Represented?

- The Role Of The Microphone With Sound And Audio

- Related Questions

What Is Sound?

Sound is defined as a vibration that typically propagates as an audible longitudinal wave of pressure through a transmission medium such as a gas, liquid or solid.

Energetically, sound is mechanical wave energy. A sound wave, then, is an oscillation of matter that transfers energy through a medium (gas, liquid, or solid).

These waves have the potential to travel long distances, carrying energy along the way.

Though the waves may travel far, the movement of the medium (which must possess elasticity and inertia) is very limited. The medium material (particles) does not oscillate far from equilibrium.

Sound is most easily understood as what travels from a sound source (voice, musical instrument, loudspeaker, headphones, etc.) through the air and reaches our ears in order for us to hear it.

If we really get down to it, our sense of hearing is based more on audio than on sound itself. The inner ear converts the sound waves that reach it into electrical nerve signals, and it is these signals that our brain actually receives.

Sound waves cause tiny variations in pressure and displacement within their medium, in peaks and troughs. The particles of the medium go through repeating cycles of compression (maximum pressure) and rarefaction (minimum pressure).

The frequency of these cycles is measured in Hertz (cycles/second). Sound waves are generally made of many overlapping frequencies that, when sounded together, yield the character of the sound itself.

- Audible sound, as we hear it, is within the frequency range of 20 Hz to 20,000 Hz.

- Infrasound is inaudible sound below 20 Hz.

- Ultrasound is inaudible sound above 20,000 Hz.

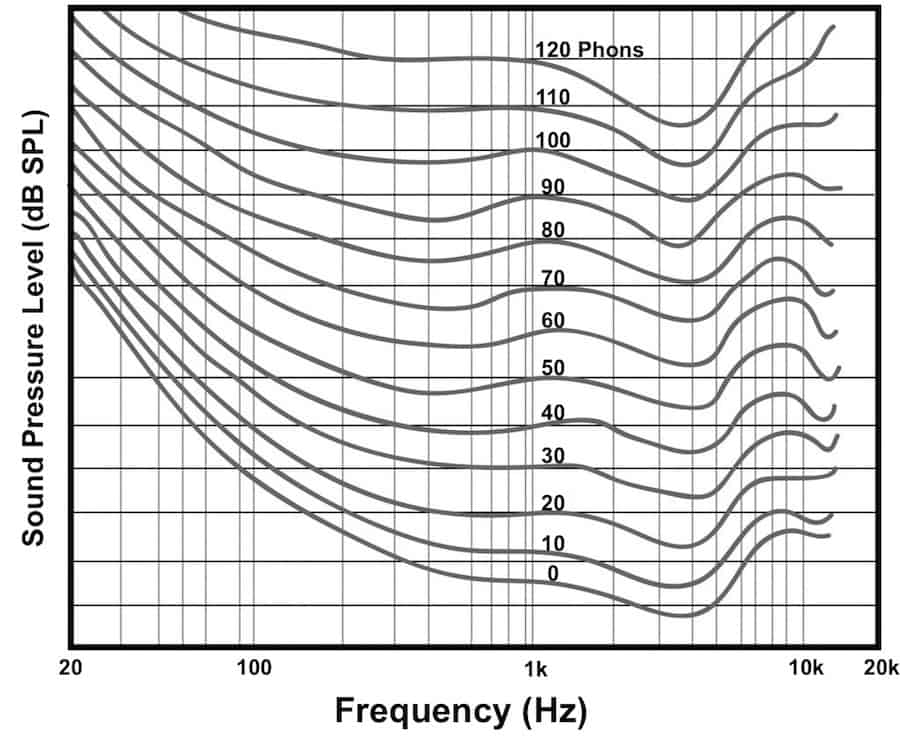

As humans, we are generally born with the ability to hear across this entire range, though as we age and damage our hearing, this range narrows (particularly at the high end). On top of this, our ears/brains are not equally sensitive to all frequencies in the spectrum. The Fletcher-Munson curves are a great resource for understanding how our hearing sensitivity varies across the audible spectrum.

Sound travels through different mediums at different speeds. Let's look at a few common examples here:

- Air @ 20°C (68°F): 343 m/s (1125 ft/s)

- Air @ 0°C (32°F): 331 m/s (1086 ft/s)

- Water: 1482 m/s (4862 ft/s)

- Steel: 5960 m/s (19554 ft/s)
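To see how temperature and frequency play into this, here's a small Python sketch. It assumes dry air and uses the common approximation c ≈ 331.3 · √(1 + T/273.15) m/s (with T in °C), then computes wavelength as speed divided by frequency. The function names are hypothetical, for illustration only.

```python
import math

def speed_of_sound_air(temp_c: float) -> float:
    """Approximate speed of sound (m/s) in dry air at temp_c degrees Celsius."""
    return 331.3 * math.sqrt(1 + temp_c / 273.15)

def wavelength_m(frequency_hz: float, speed_m_s: float) -> float:
    """Wavelength (m) is the speed of sound divided by the frequency."""
    return speed_m_s / frequency_hz

for temp in (0.0, 20.0):
    print(f"{temp:>4.0f} °C: {speed_of_sound_air(temp):.1f} m/s")

c = speed_of_sound_air(20.0)
for f in (20, 1_000, 20_000):  # the edges and middle of the audible range
    print(f"{f:>6} Hz -> wavelength {wavelength_m(f, c):.4f} m")
```

At 20 °C this gives roughly 343 m/s, so audible wavelengths in air span from about 17 m (20 Hz) down to under 2 cm (20 kHz).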

What Is Audio?

Audio is most simply described as electrical energy (active or potential) that represents sound.

Audio frequencies, like sound, are in the audible range (for humans) of 20 Hz – 20,000 Hz. Unlike sound, there are no “infra” or “ultra” audio frequencies.

Audio can be further differentiated into two categories: analog and digital.

What Is Analog Audio?

Analog audio represents sound as an electric AC voltage (whether active or potential).

As mentioned, sound waves cause oscillating variations in pressure and displacement within their medium, in peaks and troughs. The particles of the medium go through repeating cycles of compression and rarefaction (measured in hertz, or cycles per second).

Analog audio represents sound with an AC voltage that coincides with the sound. In a perfect conversion between sound and audio or vice versa, these AC voltages would have the same frequencies and relative amplitudes as the sound waves themselves.

The “converters” are known as transducers and include microphones (sound into audio), and loudspeakers and headphones (audio into sound).

Like I said above, these analog audio electric AC voltages can be either active or potential.

What I mean by this is that analog audio can be travelling through analog audio equipment as it is processed and played back. It can also be recorded by analog means (on tape, vinyl, etc.) in order to be stored for later playback. When a vinyl record or tape is played back, AC voltages (audio signals) are run through circuitry to a playback transducer.

Magnetic tape and vinyl records tend to wear with time, so analog equipment and media must be well taken care of. Each time analog audio is reproduced on vinyl or tape, some quality is lost.

Note that analog synthesizers also create analog audio. Analog audio does not always have to be created initially from sound by a transducer like a microphone.

What Is Digital Audio?

Digital audio represents sound as a series of binary numbers.

I like to think about digital audio as being a digital representation of analog audio.

Basically, digital audio represents sound in the same waveform-based way that analog does. The big difference is that digital is discrete rather than continuous. Digital audio waveforms are represented by tiny samples of varying amplitudes that are placed one after another to build a representation of an audio signal.
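As a rough illustration of those tiny samples, the Python sketch below samples a 1 kHz sine wave at 48 kHz and quantizes each sample to a signed 16-bit integer, the kind of number a 16-bit WAV file stores. The helper names, the 0.5 amplitude, and the choice of 16-bit PCM are assumptions for the example.

```python
import math

def sample_sine(freq_hz, sample_rate, n_samples, amplitude=0.5):
    """Sample a sine wave: one float value (between -1.0 and 1.0) per sample."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * n / sample_rate)
            for n in range(n_samples)]

def to_pcm16(samples):
    """Quantize float samples to signed 16-bit integers (the stored binary numbers)."""
    max_code = 2 ** 15 - 1  # 32767, the largest positive 16-bit value
    return [round(max(-1.0, min(1.0, s)) * max_code) for s in samples]

samples = sample_sine(1_000, 48_000, 8)  # first 8 samples of a 1 kHz tone at 48 kHz
for s, q in zip(samples, to_pcm16(samples)):
    # q & 0xFFFF shows the 16-bit two's-complement bit pattern actually stored
    print(f"{s:+.4f} -> {q:+6d} -> {q & 0xFFFF:016b}")
```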

Digital audio can be stored on hard drives, CDs, servers, or anywhere else a digital file may be kept.

Common digital audio file formats include:

- .aiff: A standard uncompressed audio file format developed by Apple, commonly used for CD-quality audio.

- .flac: A file format for the Free Lossless Audio Codec, an open-source lossless compression codec.

- .mp3: A lossy audio coding format (MPEG-1 Audio Layer III), specifically optimized for transparent compression of stereo audio at bitrates of 160–180 kbit/s.

- .wav: Standard audio file container format used mainly on Windows PCs. Commonly used for storing uncompressed (PCM), CD-quality sound files.

Unlike analog, digital audio signals can be reproduced over and over again with no loss of quality.

Note that in order to listen to digital audio, the audio must first be converted into analog in order to drive the speakers or headphones which are inherently analog. This is done with a digital-to-analog converter.

Similarly, in order to record digital audio with a microphone, we must convert the mic's inherently analog audio signal into digital signals. This is done with an analog-to-digital converter. Some mics (like USB mics) have these ADCs built into their designs while most other mics will require a separate ADC (like a digital mixer or an audio interface).

I mentioned the speed of sound earlier. Do audio signals travel at a certain speed as well?

Let's begin answering this question by restating that audio is an electrical representation of sound. Electrical signals are carried by electromagnetic waves, which travel at the speed of light (299,792,458 m/s) in a vacuum.

That being said, electrical audio signals travel somewhat slower in practice because of the medium they travel through. In a cable, the insulation (dielectric) and the cable's construction slow the signal to a fraction of the speed of light, known as the cable's velocity factor (often around two-thirds of the speed of light or more). So although mic signals, like all electrical signals, have the potential to travel at the speed of light, in practice they never quite reach it, though they remain vastly faster than sound.

Note that wirelessly transmitted audio signals travel via radio frequencies through the air at the same speed light does.
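To put those speeds in perspective, here's a quick Python comparison of how long a signal takes to cover 10 meters of cable versus how long sound takes to cover 10 meters of air. The 0.66 velocity factor (the fraction of the speed of light at which a signal travels in a given cable) is an assumed, illustrative value; real cables vary with their construction.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s in a vacuum
SPEED_OF_SOUND_AIR = 343.0      # m/s in air at 20 °C

def delay_seconds(distance_m: float, speed_m_s: float) -> float:
    """Time for something moving at speed_m_s to cover distance_m."""
    return distance_m / speed_m_s

distance = 10.0         # meters
velocity_factor = 0.66  # assumed value for illustration; depends on the cable

cable = delay_seconds(distance, SPEED_OF_LIGHT * velocity_factor)
air = delay_seconds(distance, SPEED_OF_SOUND_AIR)
print(f"10 m of cable: {cable * 1e9:.1f} ns")
print(f"10 m of air:   {air * 1e3:.1f} ms")
```

Even at the reduced cable speed, the electrical signal arrives in tens of nanoseconds, while the sound takes around 29 milliseconds.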

For more information on wirelessly transmitted audio and wireless mics, check out my article How Do Wireless Microphones Work?

How Are Sound And Audio Measured And Represented?

Measuring is essential if we are to effectively use sound and audio to create art or any type of audio product.

There are many ways in which we measure and describe sound and many other ways we measure and describe audio. Though sound and audio are closely related, only a few of the factors we measure are common to both. For the most part, we use different metrics when measuring sound and audio.

Let's look at the similarities and differences in measurement.

Sound and audio are both measured in terms of level/amplitude and frequency.

Frequencies Of Sound And Audio

Frequencies in sound and audio represent the same thing and are measured the same way (in hertz) across the range of human hearing (20 Hz – 20,000 Hz). The amplitudes of these frequencies, however, are measured differently for audio than for sound.

Audio is sometimes characterized by bandwidth, which represents the difference between the uppermost frequency and the lowest frequency of the audio signal.

Sound Level/Amplitude

The level/amplitude of sound is generally measured in:

- Sound pressure

- Decibels Sound Pressure Level (dB SPL)

Sound Pressure

Sound pressure is the localized pressure deviation from the ambient atmospheric pressure in a medium that is caused by a sound wave in that medium. The SI unit of measure for sound pressure is the pascal (Pa), which is much more commonly used than the imperial PSI (pounds per square inch).

1 pascal is equal to 1 newton per square meter.

Standard atmospheric pressure at sea level is given as 101,325 Pa. The deviation caused by sound must be smaller than this (a rarefaction cannot push the pressure below zero) and is usually much, much smaller than this value.

- The threshold of hearing (in pascals) is 2.00 × 10⁻⁵ Pa (20 µPa) at the ear.

- Normal conversation sound waves are roughly 0.002 to 0.02 Pa (at a distance of 1 meter).

- The threshold of pain, where sound pressure will cause pain and injury, is 200 Pa at the ear.

Sound pressure is a linear value.

Decibels Sound Pressure Level (dB SPL)

dB SPL is defined by the following equation:

\text{dB SPL} = 20 \log_{10} (\frac{P_1}{P_0})

P1 is the measured RMS sound pressure of the sound (in pascals)

P0 is a reference value of 20 μPa, which corresponds to the threshold of human hearing (in healthy ears).

94 dB SPL is equal to 1 Pascal of sound pressure.

- The threshold of hearing (in dB SPL) is 0 dB SPL at the ear.

- Normal conversation sound waves are roughly 40 to 60 dB SPL (at a distance of 1 meter).

- The threshold of pain, where sound pressure will cause pain and injury, is 140 dB SPL at the ear.

Unlike sound pressure, which is a linear measurement, decibels sound pressure level is logarithmic.
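Here's the dB SPL equation, plus its inverse, as a small Python sketch (the helper names are hypothetical). Feeding it the sound pressures from the lists above reproduces the matching dB SPL figures.

```python
import math

P0 = 20e-6  # reference pressure: 20 µPa, the threshold of hearing

def pa_to_db_spl(pressure_pa: float) -> float:
    """Convert an RMS sound pressure in pascals to dB SPL."""
    return 20 * math.log10(pressure_pa / P0)

def db_spl_to_pa(level_db: float) -> float:
    """Convert a level in dB SPL back to an RMS sound pressure in pascals."""
    return P0 * 10 ** (level_db / 20)

for p in (20e-6, 0.002, 0.02, 1.0, 200.0):
    print(f"{p:>9.6f} Pa -> {pa_to_db_spl(p):6.1f} dB SPL")
```

Note how a 10,000,000x range of pressures (20 µPa to 200 Pa) collapses into a 0 to 140 dB range, which is exactly why the logarithmic scale is so convenient.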

I'll mention the inverse-square law and inverse-distance law here to cap off our explanation of sound amplitude.

- Inverse-square law of sound: the intensity of sound is inversely proportional to the square of the distance from the sound source.

- Inverse-distance law of sound pressure: the sound pressure of a sound wave is inversely proportional to the distance the sound wave has travelled from the sound source.

Sound pressure radiating from a point source is halved for each doubling of distance from the point source (this is a decrease of 6.02 dB SPL).

The pressure ratio is not governed by the inverse-square law, but it is still inversely proportional to the distance the sound wave travels. It is governed by the inverse-distance law.
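The inverse-distance law translates into a simple rule we can sketch in Python. For a point source in a free field (an idealized assumption; real rooms add reflections), SPL drops by 20·log10(r₂/r₁) dB when moving from distance r₁ to r₂, which works out to about 6.02 dB per doubling of distance. The 94 dB SPL at 1 m starting point is just an example value.

```python
import math

def spl_at_distance(spl_ref_db: float, ref_distance_m: float, distance_m: float) -> float:
    """Free-field point source: pressure ∝ 1/r, so SPL falls by 20·log10(r / r_ref)."""
    return spl_ref_db - 20 * math.log10(distance_m / ref_distance_m)

for r in (1, 2, 4, 8):  # each step doubles the distance
    print(f"{r} m: {spl_at_distance(94.0, 1.0, r):.2f} dB SPL")
```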

Audio Level/Amplitude

The level/amplitude of audio is generally measured in:

- Voltage RMS in millivolts (mV)

- Decibels volts (dBV)

- Decibels unloaded (dBu)

- Decibels full scale (dBFS)

Voltage RMS (mV or V)

The AC voltages that make up audio signals are typically measured in millivolts or volts RMS.

RMS stands for root mean square. With AC electrical signals, it represents the value of a direct current that would produce the same average power dissipation in a resistive load.

Voltage RMS, then, gives us a good idea of the effective strength of the signal rather than the amplitude of the peaks and troughs of the AC signal. Remember that the actual voltage of an AC signal is 0 at certain points of its cycle, so RMS is useful for understanding the effective voltage of the signal.

Note that the average value of a single-frequency AC voltage (with a fixed amplitude) would be 0 volts regardless of the amplitude. Over a complete cycle, the signal would exhibit just as much positive voltage as negative voltage.
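A short Python sketch makes the difference between the average and the RMS value concrete. It samples one full cycle of a sine wave with an assumed 1 V peak: the mean comes out at essentially 0 V, while the RMS comes out at peak/√2 ≈ 0.707 V. That peak/√2 relationship holds for pure sine waves only; more complex audio signals have different peak-to-RMS ratios.

```python
import math

def rms(values):
    """Root mean square: the square root of the mean of the squared values."""
    return math.sqrt(sum(v * v for v in values) / len(values))

peak = 1.0  # volts (assumed for the example)
n = 1000    # samples across exactly one cycle
cycle = [peak * math.sin(2 * math.pi * k / n) for k in range(n)]

print(f"mean: {sum(cycle) / n:+.6f} V")  # ~0 V: the positive and negative halves cancel
print(f"RMS:  {rms(cycle):.4f} V")       # ~0.7071 V, i.e. peak / sqrt(2)
```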

Decibels Volts (dBV)

dBV are decibels relative to 1 volt, regardless of impedance.

\text{dBV} = 20 \log_{10} (\frac{V_2}{V_1})

V1 is the reference voltage of 1 V

V2 is the signal's RMS voltage (in volts)

This is typically used to measure the output RMS voltage of microphones. It is also used to denote consumer line level audio (-10 dBV).

Decibels Unloaded (dBu)

dBu are decibels relative to √0.6 V ≈ 0.775 volt, regardless of impedance. This reference is derived from the voltage that dissipates 0 dBm (1 mW) in a 600 Ω load.

The reference voltage comes from V = √(600 Ω × 0.001 W) = √0.6 V ≈ 0.775 V

\text{dBu} = 20 \log_{10} (\frac{V_2}{V_1})

V1 is the reference voltage of √0.6 V ≈ 0.775 volt

V2 is the signal's RMS voltage (in volts)

dBu is generally used in professional audio equipment where professional line level is set to +4 dBu and equipment is calibrated to show 0 on VU meters when a +4 dBu signal is applied.
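Converting between volts, dBV, and dBu is just a matter of swapping the reference voltage. This Python sketch (with hypothetical helper names) checks how consumer line level (-10 dBV) and professional line level (+4 dBu) compare.

```python
import math

DBV_REF = 1.0             # volts RMS
DBU_REF = math.sqrt(0.6)  # ≈ 0.7746 V RMS (1 mW into 600 Ω)

def volts_to_dbv(v: float) -> float:
    return 20 * math.log10(v / DBV_REF)

def volts_to_dbu(v: float) -> float:
    return 20 * math.log10(v / DBU_REF)

def dbv_to_volts(db: float) -> float:
    return DBV_REF * 10 ** (db / 20)

def dbu_to_volts(db: float) -> float:
    return DBU_REF * 10 ** (db / 20)

consumer = dbv_to_volts(-10)  # consumer line level
pro = dbu_to_volts(4)         # professional line level
print(f"-10 dBV = {consumer:.3f} V RMS ({volts_to_dbu(consumer):+.2f} dBu)")
print(f"+4 dBu  = {pro:.3f} V RMS ({volts_to_dbv(pro):+.2f} dBV)")
```

The two line levels end up roughly 11.8 dB apart (about 0.316 V versus 1.228 V RMS), so the two standards aren't interchangeable without adjusting gain.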

Decibels Full Scale (dBFS)

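dBFS (decibels relative to full scale) measure digital audio levels relative to the digital ceiling of 0 dBFS, the highest level a digital system can represent. Digital audio that tries to exceed this ceiling is clipped.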

Digital audio outputs should remain below 0 dBFS to avoid undesirable digital distortion (unless this effect is wanted).
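As a sketch of how a dBFS level relates to actual digital sample values, the Python function below finds the peak of some signed 16-bit samples and expresses it relative to the largest positive value, 32,767. Treating 32,767 as full scale is an assumption; converters and meters differ slightly in how they define it.

```python
import math

def peak_dbfs(samples, bit_depth=16):
    """Peak level in dBFS for signed integer PCM (0 dBFS = largest positive code)."""
    full_scale = 2 ** (bit_depth - 1) - 1  # 32767 for 16-bit
    peak = max(abs(s) for s in samples)
    if peak == 0:
        return float("-inf")  # digital silence has no finite dB level
    return 20 * math.log10(peak / full_scale)

print(f"{peak_dbfs([0, 16384, -16000]):.2f} dBFS")  # a peak at about half of full scale
```

A peak at half of full scale sits about 6 dB below the ceiling, leaving that much headroom before clipping.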

Common Audio Signal Levels

Audio signals are often defined within one of the following levels:

Mic Level

Mic level is the typical and expected level of professional microphone outputs and mic inputs.

Mic level is generally between 1 and 10 millivolts, or -60 to -40 dBV. These microphone signals require amplification (via microphone preamplifiers) to reach line level (+4 dBu) for use in mixing consoles and DAWs.
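A quick Python calculation shows roughly how much preamp gain that implies. It assumes the 1 to 10 mV mic-level range and a +4 dBu (≈1.228 V RMS) target; real gain settings depend on the mic, the source, and the preamp.

```python
import math

PRO_LINE_VOLTS = math.sqrt(0.6) * 10 ** (4 / 20)  # +4 dBu ≈ 1.228 V RMS

def required_gain_db(input_volts: float, target_volts: float = PRO_LINE_VOLTS) -> float:
    """Gain in dB needed to raise input_volts to target_volts (both RMS)."""
    return 20 * math.log10(target_volts / input_volts)

for mic_mv in (1, 10):  # the typical mic-level range
    print(f"{mic_mv:>2} mV -> +4 dBu: about {required_gain_db(mic_mv / 1000):.1f} dB of gain")
```

That works out to roughly 42 to 62 dB of gain.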

Line Level

Line level, as discussed before, actually has two levels:

- Professional line level: +4 dBu

- Consumer line level: -10 dBV

Line level is a specified analog audio signal strength for use in most audio devices, whether professional or consumer-grade.

Mic level signals must be amplified to line level and line signals must be amplified to speaker level for loudspeakers/headphones.

Instrument Level

Instrument level is a broad term for the signal level from electric instrument outputs or pickups inside or outside the instruments. Instrument levels range widely from a few millivolts AC to a few volts AC.

DI boxes are often put in-line to convert instrument level signals to mic or line level signals for use with other audio equipment.

Speaker Level

Speaker level is the highest of the four signal levels we deal with in the audio world (mic, instrument, line, and speaker). It is the amplified signal that a power amplifier sends to passive loudspeakers, typically ranging from several volts to tens of volts depending on the amplifier's power and the speaker's impedance.

Microphones output mic level audio signals. To learn more about microphones and their audio signals, check out the following MNM articles:

• What Is A Microphone Audio Signal, Electrically Speaking?

• Do Microphones Output Mic, Line, Or Instrument Level Signals?

Common Sound Measurements

Timbre

Timbre is a somewhat subjective term describing the characteristics of a sound that distinguish it from another sound with the same pitch and loudness.

For example, an acoustic guitar string ringing at A4 = 440 Hz will sound different than a piano string ringing at A4 = 440 Hz.

The main factors of timbre include the harmonic content of a sound and the dynamic characteristics of the sound like vibrato and the attack-decay envelope.

Loudness (Perceived)

Perceived loudness is a psycho-acoustic quantity. The factors of loudness include:

- Sound pressure level

- Frequency

- Time and attack-decay envelope

- Health of the subject's hearing

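Perceived loudness is incredibly complex and difficult to measure, though the phon is a commonly used unit: a sound's loudness level in phons equals the dB SPL of a 1 kHz tone that is perceived as equally loud (so 40 phons is as loud as a 1 kHz tone at 40 dB SPL).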

However, sounds do not only happen at a single frequency and do not typically produce consistent sound pressure levels over significant amounts of time.

Field (Free Or Diffuse)

Sound is sometimes measured by sound fields that help to describe the spatial positions of the sound source and the sound observer (listener, microphone, etc.).

When sound radiates from a sound source, it may travel directly to the observer in a straight line or it may reach the observer indirectly after being reflected.

Our ears and our microphones typically experience both direct and indirect sound waves from the source.

Free field represents an acoustic field where only direct sound is present while diffuse field represents an acoustic field where only reflected sound is present.

Though it's extremely difficult to actually measure the field of a given sound, the combination of free and diffuse fields can be subjectively heard as dry and/or wet; as being reverberant; or as having a delay or echo.

Directionality

Though sound, in general, radiates from its sound source in all directions, it can be considered quite directional, especially at higher frequencies.

The most obvious example of this is human speech. Human speech is particularly present in and around 4 kHz, which is a fairly directional frequency range.

So, with human speech, there is a significant difference between someone speaking to us face-to-face and someone speaking while facing away from us. Directionality is a factor in sound, though it is impractical to measure with true precision.

Pitch

Pitch is a perceptual musical property that tells us if a note is higher or lower than another and is largely based on the fundamental frequency of the note.

Pitch is an essential part of musical notation, melody, and harmony.

To take our earlier example, a note at A4 = 440 Hz on the acoustic guitar and a note at A4 = 440 Hz on the piano have the same pitch.

Duration

The duration of a sound is an important measurement.

One method of measuring the overall duration of a sound is to time it from the initial vibration until the level returns to the ambient noise (the end of the sound).

However, the attack/decay envelope is also important to duration. This envelope helps to measure the change in the sound as it develops and dissipates in the medium.

Common Audio Measurements

- Distortion

- Signal-to-noise ratio

- Signal impedance

- Sample rate (digital)

- Bit-depth (digital)

- Balanced or unbalanced (analog signal)

Distortion

Distortion is any alteration to the original waveform of an audio signal. With audio, distortion typically happens when the signal overloads the electronics through which it passes. This can be due to a very loud sound but is generally brought about by too much gain.

Analog audio distortion typically happens in the form of saturation. Saturation happens when analog audio equipment (mic and console circuitry, tubes, transistors, tape, amplifiers, etc.) is overloaded.

Saturation is a subtle form of distortion that adds pleasant-sounding harmonics to the audio signal. This type of distortion is cherished as part of what makes analog audio sound so warm and pleasant.

Digital audio distortion is not-so-cherished by listeners. Digital distortion, known as digital clipping, happens when our digital audio signal goes above 0 dBFS, at which point we run out of binary bits (1s and 0s) to represent the amplitude of the signal.

Audio signals above 0 dBFS are effectively flattened at their peaks and troughs. At the extreme, digital clipping will turn a sine wave into a square wave. This drastically alters the sound of the signal.

At more practical levels of digital distortion, the audio signal will suffer from harsh distortion and even digital artifacts. Therefore, unlike analog saturation, digital clipping is best avoided.
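Here's a simplified Python sketch of hard digital clipping. It assumes floating-point audio where ±1.0 represents 0 dBFS (a common convention), pushes a sine wave 20 dB too hot, and flattens everything beyond full scale. Most of the resulting waveform ends up pinned at the ceiling, which is why heavy clipping approaches a square wave.

```python
import math

def hard_clip(samples, ceiling=1.0):
    """Flatten anything beyond ±ceiling (1.0 = 0 dBFS in this floating-point convention)."""
    return [max(-ceiling, min(ceiling, s)) for s in samples]

n = 1000
sine = [math.sin(2 * math.pi * k / n) for k in range(n)]  # one cycle peaking at 0 dBFS
overdriven = [10.0 * s for s in sine]                     # 10x = +20 dB of excess gain
clipped = hard_clip(overdriven)

flat = sum(1 for s in clipped if abs(s) == 1.0)
print(f"{flat / n:.0%} of samples are stuck at full scale")
```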

With microphones, distortion is typically measured in total harmonic distortion (THD).

THD is a measurement of the harmonic distortion in an audio signal as a percentage of cumulative overtones added to a fundamental frequency. This is most easily measurable with a single-frequency sine wave applied directly at the mic diaphragm.

1% THD is the typical threshold when measuring a mic’s maximum sound pressure level.
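The THD calculation can be sketched in Python using one common definition: the RMS sum of the harmonic amplitudes divided by the amplitude of the fundamental, expressed as a percentage. Other definitions exist (some specs reference the total signal, or include noise as THD+N), and the harmonic amplitudes here are hypothetical.

```python
import math

def thd_percent(fundamental: float, harmonics) -> float:
    """THD (%) = sqrt(sum of squared harmonic amplitudes) / fundamental amplitude × 100."""
    return 100 * math.sqrt(sum(h * h for h in harmonics)) / fundamental

# Hypothetical RMS amplitudes: a 1 kHz fundamental plus its 2nd, 3rd, and 4th harmonics
print(f"THD: {thd_percent(1.0, [0.008, 0.005, 0.002]):.2f} %")
```

In this made-up case the result lands just under 1%, which would put the mic right below the typical max SPL threshold.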

Signal-To-Noise Ratio

The signal-to-noise ratio of an audio signal, as the name suggests, is the ratio between the level of the signal that represents the intended sound and the part of the signal that represents noise.

Noise can be picked up in a microphone from ambient sound in the acoustic space and be present in an audio signal. It can also be introduced by electromagnetic interference from power mains or radio waves. Another common source of noise is the electronics (amps, tubes, transistors, transformers, etc.) of an audio circuit.

Signal-to-noise ratio is typically measured in decibels since decibels are inherently a ratio and are already used to describe signal levels.

SNR is sometimes included in a microphone's specifications sheet. This spec is calculated by taking the common measurement tone of 1 kHz at 94 dB SPL and subtracting the microphone's self-noise rating from this 94 dB.
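That spec calculation is simple enough to show in a couple of lines of Python. The self-noise ratings below are hypothetical examples, not specific microphones.

```python
def mic_snr_db(self_noise_dba: float, reference_spl_db: float = 94.0) -> float:
    """Mic SNR spec: the 94 dB SPL reference level minus the mic's self-noise rating."""
    return reference_spl_db - self_noise_dba

for noise in (7, 15, 25):  # hypothetical self-noise ratings in dB-A
    print(f"self-noise {noise} dB-A -> SNR {mic_snr_db(noise):.0f} dB")
```

The lower the self-noise, the higher the resulting SNR figure.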

To read about signal-to-noise ratio and microphones in greater detail, please check out my article What Is A Good Signal-To-Noise Ratio For A Microphone?

Signal Impedance

Electrical impedance is a critical value in analog audio equipment.

Electrical impedance is the measure of the opposition that a circuit presents to an alternating current when a voltage is applied. It can be thought of as “AC resistance” in that sense.

If the impedance is too high, an audio signal will not be able to travel through any significant length of cable or circuitry without severe degradation.

Transistors and tubes are often used in microphones as impedance converters in order to bring down the impedance of the audio signal. Step-down transformers have a similar effect on impedance as well.

For maximum voltage transfer from one piece of audio equipment to another, we need to “bridge” the inputs and outputs. This means we want the input impedance of an audio device to be much greater (typically at least 10x) than the output impedance of the source device that is sending audio to the input device.

This can be described in the formula below, where the source device is a microphone (outputting an audio signal) and input device is a mic preamplifier (receiving an audio signal):

V_{input} = \frac{Z_{input} V_{source}}{Z_{input} + Z_{source}}

V(input) = Voltage at the preamp input.

V(source) = Voltage at the microphone output.

Z(input) = Input impedance of preamp (microphone load impedance).

Z(source) = Microphone output impedance.
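Plugging some numbers into that formula shows why bridging matters. This Python sketch treats the impedances as purely resistive (a simplification) and uses hypothetical values, a 10 mV mic with a 150 Ω output impedance, to compare a matched 150 Ω input against inputs at 10x and 20x the source impedance.

```python
def input_voltage(v_source: float, z_source: float, z_input: float) -> float:
    """Voltage divider: V_input = Z_input * V_source / (Z_input + Z_source)."""
    return z_input * v_source / (z_input + z_source)

V_MIC = 0.010  # hypothetical mic output: 10 mV
Z_MIC = 150.0  # hypothetical mic output impedance in ohms (treated as resistive)

for z_pre in (150.0, 1_500.0, 3_000.0):  # matched, 10x, and 20x input impedance
    v = input_voltage(V_MIC, Z_MIC, z_pre)
    print(f"Z_input {z_pre:>6.0f} Ω: {v * 1000:.2f} mV ({v / V_MIC:.0%} of the mic's output)")
```

With a matched 150 Ω input, half of the voltage is lost; at 10x (1,500 Ω) the preamp sees about 91% of the mic's output voltage, and at 20x about 95%.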

For more information on audio signal impedance, check out the following My New Microphone articles:

• Microphone Impedance: What Is It And Why Is It Important?

• What Is A Good Microphone Output Impedance Rating?

Sample Rate (Digital)

Digital audio is essentially a representation of analog audio which is a representation of sound.

Analog audio waveforms are continuous by nature. Digital audio signals, on the other hand, are discrete. Essentially, digital audio takes very fast “snapshots” of an audio signal and puts them one after another to create a coherent representation of sound.

To our ears, digital audio does an excellent job at representing sound. This is because our ears cannot distinguish between these “snapshots” of sound so the digital audio playback sounds normal to us.

These “snapshots” are known as samples.

The sample rate of a digital signal is the number of samples in a second of audio.

Common sample rates include 44.1 kHz (44,100 samples per second) and 48 kHz (48,000 samples per second). Other sample rates, like 88.2 and 96 kHz, are gaining popularity, but 44.1 and 48 kHz are the most common. 44.1 kHz is typically used for music, while 48 kHz is typically used for video.

As we can imagine, with that many samples of audio per second, our ears are incapable of hearing the jump points between samples.
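To get a feel for just how close together those samples are, this Python snippet (with a hypothetical helper name) computes the time between consecutive samples for a few common rates.

```python
def sample_period_us(sample_rate_hz: float) -> float:
    """Time between consecutive samples, in microseconds."""
    return 1_000_000 / sample_rate_hz

for rate in (44_100, 48_000, 96_000):
    print(f"{rate / 1000:g} kHz: one sample every {sample_period_us(rate):.2f} µs")
```

At 44.1 kHz, a new sample arrives roughly every 22.7 microseconds (per channel).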

Bit-Depth (Digital)

For each sample above, the digital waveform needs to have a specific amplitude.

The bit-depth is the number of bits used to store each sample, and it determines the number of possible amplitudes any given sample can have in a digital audio signal. Each bit can be either a 1 or a 0, so each additional bit of depth doubles the number of possible amplitudes.

Here are a few common audio bit-depths along with the number of possible amplitudes and their overall dynamic range:

| Bit-Depth | Possible Amplitudes | Dynamic Range |
|---|---|---|
| 8-bit | 256 | ~48 dB |
| 16-bit | 65,536 | ~96 dB |
| 24-bit | 16,777,216 | ~144 dB |
| 32-bit (integer) | 4,294,967,296 | ~192 dB |
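The figures in that table follow from two simple relationships for integer PCM: n bits give 2^n possible amplitudes, and the dynamic range is about 20·log10(2^n), or roughly 6.02 dB per bit. Here they are as a Python sketch; floating-point formats store samples differently, so this calculation doesn't apply to 32-bit float.

```python
import math

def pcm_levels(bits: int) -> int:
    """Number of distinct amplitude values an integer sample of this size can hold."""
    return 2 ** bits

def dynamic_range_db(bits: int) -> float:
    """Ratio of the largest to the smallest step: 20·log10(2^bits) ≈ 6.02 dB per bit."""
    return 20 * math.log10(2 ** bits)

for bits in (8, 16, 24, 32):
    print(f"{bits:>2}-bit: {pcm_levels(bits):>13,} levels, ~{dynamic_range_db(bits):.1f} dB")
```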

Balanced Or Unbalanced (Analog Signal)

Audio signals are often defined as being balanced or unbalanced. This is particularly true in audio cables and therefore applies to audio inputs and outputs.

Unbalanced cables carry audio on one signal wire and one return/shield wire. This system is vulnerable to electromagnetic interference and cannot carry audio for significant lengths without degrading the signal.

Balanced cables carry the audio signal on two wires: one wire carries the signal in positive polarity while the other carries the signal in negative polarity. A third wire acts as a shield.

At balanced inputs, these two audio signals are combined by a differential amplifier, which subtracts one from the other. This effectively flips the opposite-polarity audio back and adds it to the other leg (reinforcing the signal) while cancelling any noise that was picked up equally by both wires along the cable's length.
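Here's an idealized Python sketch of that cancellation. It assumes the interference lands identically on both wires and that the differential amplifier is perfect; real inputs have finite common-mode rejection, so some noise always remains. The signal values are arbitrary.

```python
import random

random.seed(1)
signal = [0.5, -0.2, 0.8, 0.0, -0.6]                 # the audio signal (arbitrary values)
noise = [random.uniform(-0.3, 0.3) for _ in signal]  # interference picked up equally by both wires

hot = [s + n for s, n in zip(signal, noise)]    # positive-polarity conductor
cold = [-s + n for s, n in zip(signal, noise)]  # negative-polarity conductor

# Differential amplifier: hot minus cold. The common noise cancels and the signal doubles.
output = [h - c for h, c in zip(hot, cold)]
print([round(o, 6) for o in output])
```

The output is simply twice the original signal, with none of the added noise.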

For a deeper look into microphones and the differences between balanced and unbalanced audio signals, check out my article Do Microphones Output Balanced Or Unbalanced Audio?

The Role Of The Microphone With Sound And Audio

Microphones act as transducers that convert sound waves (mechanical wave energy) into audio signals (electrical energy).

The key microphone component in energy conversion is the diaphragm. A diaphragm moves in reaction to sound pressure and the microphone is designed to produce an electrical signal based on the movement of the diaphragm.

For a deeper understanding of microphone diaphragms and how they work, consider reading the following My New Microphone articles:

• What Is A Microphone Diaphragm?

• What Are Microphone Diaphragms Made Of? (All Diaphragm Types)

Typically, this conversion is made possible via electromagnetic induction (dynamic microphones) or via electrostatic principles (condenser microphones).

To learn more about the dynamic and condenser microphone transducer types, check out my article Microphone Types: The 2 Primary Transducer Types + 5 Subtypes.

So when sound reaches a microphone's diaphragm, the microphone outputs an audio signal. This is the essential role of any given microphone.

For an in-depth read on how microphones convert sound to audio, please read the following My New Microphone articles:

• How Do Microphones Work? (A Helpful Illustrated Guide)

• What Do Microphones Measure And How Do They Measure?

Speakers, which are essentially the exact opposite of microphones, receive analog audio signals and convert them to sound. Loudspeaker transducers generally work on the principle of electromagnetic induction just like our dynamic microphones.

Again, like a microphone, a loudspeaker uses a diaphragm. However, a loudspeaker's diaphragm is much wider and sturdier, and its voice coil is built to handle far higher signal levels. A speaker level audio signal is sent to the speaker, causing the diaphragm to push and pull, which, in turn, produces sound waves that correspond to the audio signal.

Because speakers are essentially microphones in reverse, it's possible to wire them backwards and turn a mic into a speaker and vice versa.

To learn more about microphones and speakers and how they deal with audio and sound, check out the following My New Microphone articles:

• How To Turn A Loudspeaker Into A Microphone In 2 Easy Steps

• Do Microphones Need Loudspeakers Or Headphones To Work?

• How To Plug A Microphone Into A Speaker

Related Questions

Is an audio engineer the same as a music producer? Audio engineers focus on capturing, recording, mixing, and playing back audio while music producers are involved with the creative process of adding production value to the music. These two roles are typically separated, though in budget situations, one person could fill both roles.

What is the difference between pitch and frequency? Frequency refers to cycles/oscillations per second. Sound waves and audio signals will have a variety of frequencies between 20 Hz – 20 kHz. Pitch is a perceptual musical property that tells us if a note is higher or lower than another and is largely based on the fundamental frequency of the note.