Complete Guide To Audio Modulation Effects (With Examples)

Modulation effects (and modulation in general) are powerful tools when it comes to processing audio. There are plenty of distinct effects that fall into the great category of modulation that we should understand and have at our disposal when mixing audio or sculpting the perfect tone in our instrument.

What are audio modulation effects? Audio modulation effects manipulate the input audio over time via the control of a carrier signal. The input audio is referred to as the modulator signal, which technically controls the carrier signal, which is generally produced via an oscillator generator or signal detector.

This quick definition is broad at best. In this article, we'll get into the details of how modulation works. We'll also dive into the different audio effects that utilize modulation (from flangers and phasers to ring modulators and vocoders) to develop our understanding of this awesome effect category.


Table Of Contents

- What Is Modulation?

- The Modulator

- The Carrier

- Standard Modulation Effects

- Other Effects That Use Modulation

- Other Uses Of Modulation In Audio

Jump ahead to the section titled Standard Modulation Effects to skip the discussion on modulation, modulator signals and carrier signals and get straight to the effects.

What Is Modulation?

What is modulation? Modulation, in regards to audio, is not an effect by itself. Rather, it's a process in which one signal (the modulator) controls one or more parameters of another signal (the carrier). Modulation is used by several audio effects known collectively as “modulation effects”.

Some “modulation effects” will often show up in other categories (instruments, spectral effects, etc.) but are technically modulation effects (in addition to being parts of other categories). In this article, we'll touch on them all.

So, with a modulation effect, we have a modulator signal that will modulate a carrier signal. When it comes to audio effects, the modulator will nearly always be the input audio signal. The carrier, then, will be one of the following:

- Oscillator generated by the effect

- Input-generated signal (envelope or octave)

- Separate input signal

- Expression controller

- Sequencer

For the typical modulation effects (chorus, flanger, phaser, tremolo and vibrato), the carrier is an LFO (low-frequency oscillator). However, to truly understand modulation, we will look at all the other carrier types.

Before we get into our discussion on the modulator and carrier signals, I'll reiterate the term modulation: Modulation simply means that one signal is being controlled by another signal. The factors that can be controlled are plentiful and different effects will have different modulated parameters.

The Modulator

What is the modulator signal? The modulator signal is the signal in a modulation effect that will modulate (manipulate one or more parameters of) the carrier signal.

The modulator signal is simply the input signal. This can be any audio signal that is processed by the modulation effect.

Oftentimes the modulator will be an instrument or vocal signal sent through an effects unit. Of course, it can be any audio signal that the modulation effect can effectively process.

Note that modulation effects can be designed to accept instrument, mic and/or line level signals. They can also be software effects that process audio within a computer or digital audio workstation. When we discuss the types of modulation effects, I'll be sure to offer examples of the various effect unit formats.

The Carrier

What is the carrier signal? The carrier signal is the signal in a modulation effect that will be modulated (have one or more of its parameters manipulated) by the modulator signal.

As we've discussed, the carrier is the signal that will be modulated and is generally either created by or detected by the effects unit itself.

The terminology can be confusing here, as we generally imagine a modulation effect as “modulating” the signal we input into it. However, technically speaking, the input signal is the “modulator”.

Of course, this is only the terminology. When actually using these effects, it's more about the sound than the names. That being said, it's important that, for this article, we get the housekeeping out of the way.

Okay, so we have a few different types of carrier signals to discuss:

Oscillator

What is an oscillator? An oscillator is a periodic, oscillating signal that moves between a minimum and maximum value. Oscillators often have a sine or square waveform but can have any repeating waveform. Oscillators will also have a fundamental frequency and, potentially, a harmonic profile.

Let's have a look at a graphic representation of the 4 most basic oscillator waveforms (notice that each waveform repeats twice in the following graphic and that the waveforms are named after their appearance):

- Sine wave: green

- Square wave: blue

- Triangle wave: red

- Sawtooth wave: orange
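As a rough sketch of how these four shapes could be generated digitally, each waveform can be written as a function of its position within one cycle. The function names `oscillator` and `render` are illustrative assumptions and are not taken from any particular effects unit.

```python
import math

def oscillator(shape, phase):
    """Return one sample in [-1, 1] for a phase measured in cycles."""
    p = phase % 1.0  # position within the current cycle, 0 <= p < 1
    if shape == "sine":
        return math.sin(2 * math.pi * p)
    if shape == "square":
        return 1.0 if p < 0.5 else -1.0  # high for half the cycle, low for the rest
    if shape == "triangle":
        # rises from -1 to +1 over the first half, falls back over the second
        return 4 * p - 1 if p < 0.5 else 3 - 4 * p
    if shape == "sawtooth":
        return 2 * p - 1  # ramps from -1 up toward +1, then jumps back
    raise ValueError(f"unknown shape: {shape}")

def render(shape, freq_hz, sample_rate, num_samples):
    """Render num_samples of the chosen waveform at freq_hz."""
    return [oscillator(shape, freq_hz * n / sample_rate) for n in range(num_samples)]
```

Calling `render("sine", 2.0, 100, 100)` would produce two full cycles of a sine wave, much like the two repetitions mentioned above.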

In the majority of the modulation effects that utilize oscillators, the carrier will be a sine wave, though that's not always the case. Some units will offer alternate waveforms. For the rest of the article, let's assume that the oscillator is a sine wave unless otherwise noted.

An oscillator is generally defined by its amplitude (whether that's in volts or decibels of some sort) and its frequency (cycles/second). Have a look at the following photo to get an idea of the basics of a sine wave:

We can see that a modulation effect can modulate a sound parameter up and down in a repeated pattern with an oscillator. This parameter can be:

- Amplitude of the input signal (tremolo and ring modulation)

- Delay time of a delay circuit (vibrato, chorus and flanger)

- Corner frequency of cascading all-pass filters (phaser)

- Pan position of the signal (auto-pan)

We'll get to these effects shortly.

The oscillator(s) in these effects units will typically be produced by the effects units themselves. In some cases, the oscillator may be inputted from another source if that's what is required.

The oscillator frequency (the rate at which a full period is completed) is also important when it comes to oscillator carrier signals. Frequency is measured in Hertz (Hz), which means cycles or periods per second. Note that the audible range of human hearing is in the frequency range of 20 Hz to 20,000 Hz.

When it comes to modulation effects, low-frequency oscillators (LFOs) are the most common carrier oscillators. LFOs are loosely defined as oscillators with frequencies below the audible threshold of 20 Hz, though they are often well below 20 Hz.

LFOs are used in tremolo, vibrato, chorus, flanger, phaser and auto-pan modulation effects.

In the audible frequency range, oscillators are used as audio sources in synthesizers but can also be used as carrier signals in the ring modulation effect. Oscillators within this range are also used in frequency modulation (FM) synthesis to modulate other oscillators, some of which may be outputted as audio.

Though not useful for audible modulation effects per se, it's important to mention carrier wave oscillators with much higher frequencies. These carriers are used in AM (amplitude modulation) radio broadcasting, which sits at roughly 530 to 1,700 kHz in most regions, in FM (frequency modulation) radio broadcasting, which sits at roughly 88 to 108 MHz, and in other processes.

Envelope

What is an envelope? In regards to audio, an envelope is a description of how a sound or audio signal changes over time. Envelopes often refer to the amplitude of a signal/sound. Still, they may also refer to any other parameter that either naturally changes over time or is modulated by an envelope generator to change over time.

The “carrier” may be an envelope. This can certainly be the case with synthesizers, samplers and other electronic instruments that often rely on envelope generators to modulate various parameters of the synth's signal(s).

Envelope generators typically have four stages which also apply to natural envelopes. They are the ADSR stages (attack, decay, sustain, release):

- Attack: the time taken for the initial increase from zero to the peak of the envelope.

- Decay: the time taken for the subsequent decrease from the peak to the sustain level of the envelope.

- Sustain: the level during the main sequence while the envelope is engaged (generally as the key of the synthesizer is pressed).

- Release: the time taken for the level to decay from the sustain level to zero after the envelope is disengaged (the key is released).
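To make the four stages concrete, here is a minimal sketch of an ADSR envelope with straight-line segments. The linear shape, the function names and the `gate_time` parameter (how long the key is held) are assumptions for illustration; real envelope generators often use curved segments.

```python
def held_level(t, attack, decay, sustain):
    """Envelope level while the key is still held, t seconds after key-down."""
    if attack > 0 and t < attack:
        return t / attack  # attack: rise from zero to the peak (1.0)
    if decay > 0 and t < attack + decay:
        # decay: fall from the peak to the sustain level
        return 1.0 - (1.0 - sustain) * (t - attack) / decay
    return sustain  # sustain: hold this level until the key is released

def adsr_level(t, attack, decay, sustain, release, gate_time):
    """Envelope level (0..1) at time t for a key held for gate_time seconds."""
    if t < 0:
        return 0.0
    if t < gate_time:
        return held_level(t, attack, decay, sustain)
    # release: fade from the level reached at key-up down to zero
    level_at_release = held_level(gate_time, attack, decay, sustain)
    elapsed = t - gate_time
    if release <= 0 or elapsed >= release:
        return 0.0
    return level_at_release * (1.0 - elapsed / release)
```

Sampling `adsr_level` at regular time steps gives a control signal that could then scale amplitude, filter cutoff or any other parameter.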

Though envelope generators are used in synths to modulate all sorts of parameters, they're not overly common in modulation effects themselves. That being said, it's good to know about ADSR to grasp the idea of an envelope better.

An envelope can also be used in a modulation-type effect unit. This is the case with the auto-wah/envelope filter effect, which effectively detects the envelope of the input signal and uses that envelope information/signal to control a filter of some sort.

The input signal's amplitude can be detected and analyzed to produce an envelope that can then be used to control/modulate another parameter. As mentioned, the envelope filter effect uses this info to modulate a filter.
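One common way to detect an envelope is to rectify the signal and smooth it with separate attack and release times. The sketch below assumes that approach; the function names, the default times and the 200 to 2,000 Hz sweep range are illustrative choices rather than the design of any specific auto-wah.

```python
import math

def envelope_follower(samples, sample_rate, attack_s=0.005, release_s=0.1):
    """Track the amplitude envelope of a signal (rectify, then smooth)."""
    attack_coeff = math.exp(-1.0 / (attack_s * sample_rate))
    release_coeff = math.exp(-1.0 / (release_s * sample_rate))
    env = 0.0
    out = []
    for x in samples:
        rectified = abs(x)
        # rise quickly when the input is louder, fall slowly when it is quieter
        coeff = attack_coeff if rectified > env else release_coeff
        env = coeff * env + (1.0 - coeff) * rectified
        out.append(env)
    return out

def envelope_to_cutoff(env, min_hz=200.0, max_hz=2000.0):
    """Map an envelope value (0..1) to a filter cutoff on a logarithmic scale."""
    env = min(max(env, 0.0), 1.0)
    return min_hz * (max_hz / min_hz) ** env
```

Feeding each envelope value through `envelope_to_cutoff` would yield the control signal for the filter: louder playing pushes the cutoff up, quieter playing lets it fall.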

Secondary Input Signal

Some modulation-style units can take in two different signals and use one to modulate the other. This is the case when an external oscillator is plugged into a unit. It's also the case with the vocoder. Perhaps a vocoder isn't technically a “modulation effect” but rather its own instrument. That being said, it's an awesome use of modulation in audio. More on vocoders later.

The point here is that any signal can modulate any other signal. Of course, some signals make for better modulators, and others make for better carriers. It's also true that not all parameters can be modulated to achieve a practical effect.

That being said, carrier waves could be more than just oscillators or envelopes. They could be their very own audio signals.

Expression Controller

What is an expression controller? In the context of audio signals, an expression controller is any control that can modulate the parameters of an effect or instrument.

This is where things get tricky. Is a volume fader a modulation effect? What about a gain knob? Both these controls can expressively alter the amplitude of a signal, so where do we draw the line?

Well, the truth is that expression controllers, whether they're expression pedals, modulation wheels, faders or otherwise, can certainly be linked to modulate certain parameters, but that doesn't necessarily make any particular link a “modulation effect”.

More on this in the upcoming section Standard Modulation Effects.

One expression effect worth mentioning is wah-wah, which uses an expression controller (typically an expression pedal) to achieve spectral glide in a signal's frequency response, thereby modulating the EQ/filters of the signal to cause a distinctive “wah-wah” sound. Some people, particularly in the world of guitar, would consider wah-wah, then, to be a “modulation effect”.

I'll discuss modulation controllers in more detail at the end of this article.

Standard Modulation Effects

So far, we've discovered what modulation is and the various ways it shows up in audio. We know by now that not all modulation is to be considered a “modulation effect”.

There are countless ways in which modulation can be used practically, but only a few dedicated modulation effects stand out as distinct audio effects. Most modulation, whether in a synthesizer, sampler, digital audio workstation or otherwise, is awesome but not necessarily an “effect”.

The main distinction, I suppose, between general modulation and a standard modulation effect is that the effect will have its own carrier signal and will act upon an audio signal at the input.

By that definition, all standard modulation effects have an oscillator-type carrier signal. You'll see this reflected in your DAW's modulation effects category, in the “modulation effects units” sections of your local or online shop (hardware and software), and in other articles on the subject.

So then, the definitive list of modulation effects would be:

I'll discuss the other common effects that utilize modulation in the section titled Other Effects That Use Modulation. I'll also touch on other uses of modulation in audio in the section Other Uses Of Modulation In Audio. However, before that, let's get to the main part of this article and discuss the various modulation effects.

Tremolo

What is tremolo? Tremolo is defined by a fast variation in amplitude. Tremolo is similar to vibrato, except that it acts on amplitude/level rather than pitch.

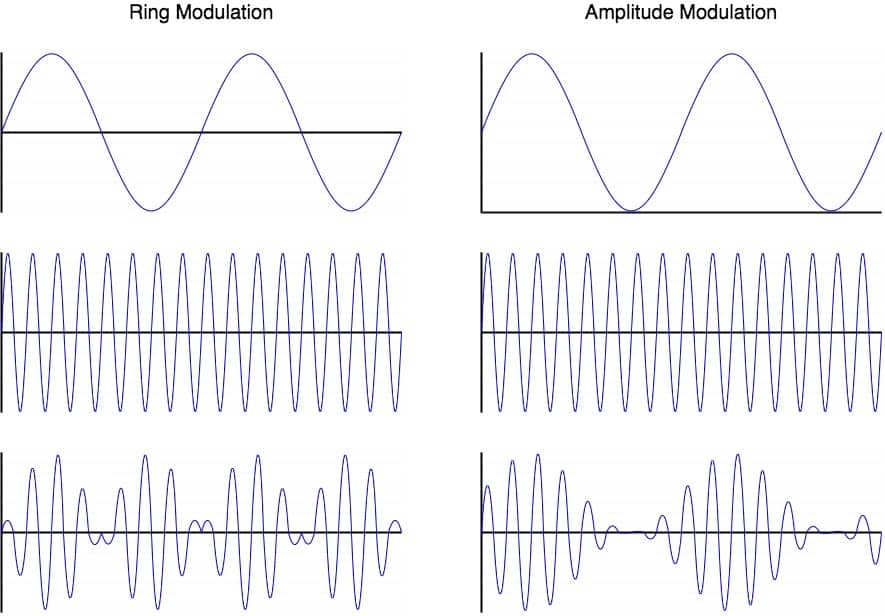

Why is tremolo a modulation effect? Tremolo is a modulation effect because the amplitude of the input signal is modulated via an LFO. Tremolo is a type of amplitude modulation where the carrier signal is a low-frequency oscillator.

I've decided to start with tremolo since it's perhaps the easiest to understand and visualize (at least for me, it is). If we can grasp the tremolo effect first, we'll have an easier time understanding the other modulation effect types.

So, as I've mentioned, tremolo is amplitude modulation at the rate of an LFO. Because of this relatively slow modulation, we are able to hear a distinct modulation in the signal's amplitude. The volume/amplitude of the signal can be perceived as rising and falling according to the LFO modulation.

The tremolo effect will often use an LFO with a frequency between 2 and 12 Hz. The LFO is often a sine wave but can also use other waveforms, including triangle and square.

The speed/rate control of a tremolo pedal affects the frequency of the LFO. The amplitude of the LFO will control the amount/depth of the tremolo's attenuation of the guitar/bass/instrument audio signal.

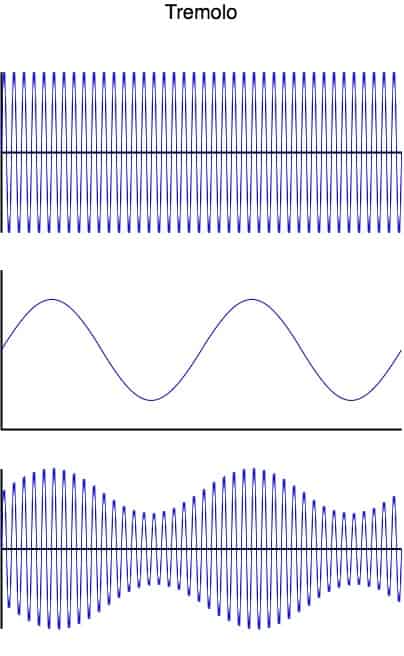

Tremolo can be visualized in the following image, where:

- Top: input signal “modulator”

- Middle: LFO “carrier”

- Bottom: Output

In the image above, we can clearly see the effect of amplitude modulation and modulation more generally. The middle waveform controls/modulates the amplitude of the first waveform to produce the third waveform.
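The same relationship can be sketched in a few lines of code. This is a minimal example assuming a sine LFO and a `depth` value between 0 and 1; the function name and defaults are illustrative only.

```python
import math

def tremolo(samples, sample_rate, rate_hz=5.0, depth=0.5):
    """Vary the level of a signal with a sine LFO.

    depth=0 leaves the signal untouched; depth=1 dips all the way to silence.
    """
    out = []
    for n, x in enumerate(samples):
        lfo = math.sin(2 * math.pi * rate_hz * n / sample_rate)  # -1 .. +1
        gain = 1.0 - depth * 0.5 * (1.0 + lfo)  # swings between 1.0 and 1.0 - depth
        out.append(x * gain)
    return out
```

Here `rate_hz` plays the role of the LFO frequency and `depth` plays the role of the LFO amplitude, matching the speed and depth behaviour described above.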

Common tremolo effect controls include:

- Depth/Intensity: adjusts the amount of amplitude variation the tremolo effect will cause.

- Rate/Speed: adjusts the rate of the tremolo effect by changing the frequency of the LFO.

- Shape: alters the waveform of the LFO.

- Tap Tempo: allows users to tap in a tempo and syncs the LFO frequency to that tempo.

- Ratio: adjusts the rate according to the tapped tempo by way of a rhythmic selection (quarter notes, quarter note triplets, dotted eighth notes, etc.).

- Level: controls the overall output level of the effect unit.
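The Tap Tempo and Ratio controls boil down to simple arithmetic. The sketch below assumes the LFO completes one cycle per chosen note value and that tempo is estimated from the average gap between taps; the names and the set of note values are illustrative.

```python
# Length of each rhythmic value, measured in quarter-note beats.
NOTE_LENGTH_IN_BEATS = {
    "quarter": 1.0,
    "quarter_triplet": 2.0 / 3.0,
    "dotted_eighth": 0.75,
    "eighth": 0.5,
}

def bpm_from_taps(tap_times_s):
    """Estimate tempo from tap timestamps (in seconds) using the average gap."""
    if len(tap_times_s) < 2:
        raise ValueError("need at least two taps")
    average_gap = (tap_times_s[-1] - tap_times_s[0]) / (len(tap_times_s) - 1)
    return 60.0 / average_gap

def lfo_rate_hz(bpm, note="quarter"):
    """LFO frequency that completes one cycle per chosen note value."""
    beats_per_second = bpm / 60.0
    return beats_per_second / NOTE_LENGTH_IN_BEATS[note]
```

At 120 BPM, for instance, quarter notes work out to a 2 Hz LFO and eighth notes to 4 Hz.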

Let's have a look at a few examples of tremolo effects units:

- Notable 500 Series tremolo unit: JHS Kodiak 500

- Notable 19″ rack mount tremolo unit: Rocktron Big Surf

- Notable tremolo effect pedal: Voodoo Lab Tremolo

- Notable tremolo plugin: Soundtoys Tremolator

The tremolo effect can be achieved in other ways besides electrical modulation. Let's look at a few examples:

- Naturally modulating the amplitude of your voice.

- Tremolo picking on stringed instruments (rapid reiteration).

- Riding a volume control up and down.

Related articles:

• What Are Tremolo Guitar Effects Pedals & How Do They Work?

• Top 11 Best Tremolo Pedals For Guitar & Bass

• Top 11 Best Tremolo Modulation Plugins For Your DAW

A note for guitarists: The “tremolo arm” (aka whammy bar) on a guitar is actually designed to provide an effect similar to vibrato (called a glissando, since it's smooth and is not linked to time), not tremolo.

Vibrato

What is vibrato? Vibrato is defined as a fast but slight up-and-down variation in pitch. Vibrato is used in singing and in instruments to add character and improve tone.

Why is vibrato a modulation effect? Vibrato is a pitch modulation effect because the delay time parameter of the delay line is modulated via an LFO. As the delay time is modulated, the audio is time-compressed and time-expanded in real-time, producing a variation in perceived pitch.

Vibrato effects units utilize modulation in an attempt to mimic the natural vibrato of vocals and stringed instruments.

This modulation effect modulates the pitch of the signal.

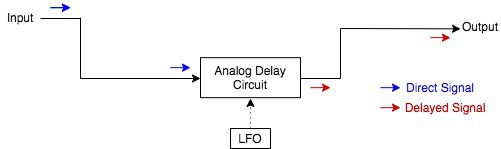

Vibrato, like chorus and flanger (we'll get to those shortly), is technically a modulated delay effect. These effects work by sending the input signal through a delay line and modulating the delay time with an LFO.

With vibrato, the output signal is only from the delay line. A basic vibrato signal path would look like this:

How does modulating the delay time affect pitch? As the delay time is shortened, the waveform is slightly compressed, causing an increase in pitch/frequency. Conversely, as the delay time is lengthened, the waveform is slightly stretched, causing a decrease in pitch/frequency.

The same thing happens with the time-compression and time-expansion of samples. Stretching an audio sample out will lower its pitch, while compressing the time of an audio sample will increase its pitch.

Because an LFO modulates the delay time, we can hear the distinct pitch-altering effect of vibrato. The frequency of the LFO determines the rate of the vibrato effect, and the amplitude of the LFO determines the amount of pitch-shift that will take place.

To reduce any audible lag, the delay time is kept as close to 0 milliseconds as possible and is modulated from there.
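A modulated delay line of this kind can be sketched as follows. The example assumes a sine LFO, a delay that sweeps between 0 and `depth_ms`, and linear interpolation between samples; all names are illustrative. The helper `peak_pitch_ratio` relies on the fact that a delay changing at a rate of d seconds per second scales the pitch by 1 - d.

```python
import math

def vibrato(samples, sample_rate, rate_hz=5.0, depth_ms=1.0):
    """Wet-only modulated delay: the delay time sweeps between 0 and depth_ms."""
    depth = depth_ms * 0.001 * sample_rate  # maximum delay in samples
    out = []
    for n in range(len(samples)):
        lfo = math.sin(2 * math.pi * rate_hz * n / sample_rate)
        delay = depth * 0.5 * (1.0 + lfo)  # 0 .. depth samples
        pos = n - delay                     # fractional read position
        i = math.floor(pos)
        frac = pos - i
        a = samples[i] if i >= 0 else 0.0
        b = samples[i + 1] if 0 <= i + 1 < len(samples) else 0.0
        out.append(a + frac * (b - a))      # linear interpolation
    return out

def peak_pitch_ratio(rate_hz, depth_ms):
    """Largest upward pitch ratio produced by the sine-modulated delay above."""
    # The delay changes fastest at pi * rate * depth seconds per second.
    return 1.0 + math.pi * rate_hz * depth_ms * 0.001
```

With a 5 Hz LFO and a 1 ms sweep, the peak pitch ratio comes out to roughly 1.016, which is a deviation of about a quarter of a semitone in each direction.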

Common vibrato effect controls include:

- Depth: controls the amount of pitch variation by adjusting the amplitude of the LFO.

- Speed/Rate: controls the speed of the vibrato effect by adjusting the frequency of the LFO.

- Rise Time/Ramp: controls the initial onset of the vibrato effect once the effect is engaged by adjusting the attack of the LFO envelope.

Let's have a look at a few examples of vibrato effects units:

- Notable 500 Series vibrato unit: JHS Emperor 500

- Notable 19″ rack mount vibrato unit: Matchless TV-1

- Notable vibrato effect pedal: Boss VB-2W Waza Craft

- Notable vibrato plugin: MeldaProduction MVibratoMB

The vibrato effect can be achieved in other ways besides electrical modulation. Let's look at a few examples:

- Naturally modulating the pitch of your voice.

- Bending on stringed instruments.

- Using a pitch-shifter.

Related articles:

• Top 8 Best Vibrato Pedals For Guitar & Bass

• What Are Vibrato Guitar Effects Pedals & How Do They Work?

I'll just reiterate here that vibrato is different than tremolo.

Chorus

What is chorus? Chorus is an effect that produces copies of a signal (the original signal and each of its copies has its own “voice”) and detunes each voice to produce a widening and thickening of the sound. Each voice interacts with the other voices to produce slight modulation and an overall larger-than-life sound.

Why is chorus a modulation effect? Chorus is a modulation effect because the delay time parameter of the delay line is modulated via an LFO. As delay time is modulated, the audio is time-compressed and expanded in real-time, producing a variation in pitch. The delayed signal(s) is then mixed back in with the dry signal.

The chorus effect is named after the use of chorus in music. That is, a group of people singing or playing the same note in unison. Naturally, there will be some slight pitch variation in the voices that make up the chorus. This slight detuning varies across time.

The effect is rich, adding depth and width to the sound.

Chorus modulation is achieved similarly to how vibrato is achieved: by modulating the delay time in a delay circuit. However, with chorus, the dry signal is mixed back in at the output.

So with chorus, we have the dry, unprocessed signal and one or more copies/delays of the signal that are modulated, so their pitch varies over time.

A simplified chorus unit signal path would look like this:

By modulating the delay time with an LFO, we effectively shift the phase of the delayed signal back and forth. This causes pitch variation and also causes some amount of phase-shift-induced filtering in the signal.

Combining the modulated phase-shift signal with the dry signal causes the time-varying detuned effect known as chorus.

The delay time is generally in the range of 18-24 milliseconds. This is too short to be heard as a distinct delay but also long enough so that excessive comb filtering does not occur (that's the realm of flanger effects).
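Putting those pieces together, a single-voice chorus could be sketched like this. The example assumes one delayed voice, a sine LFO and a default sweep of 18 to 24 ms (a 21 ms centre with a 3 ms swing); the function names and defaults are illustrative.

```python
import math

def read_delayed(samples, position):
    """Linearly interpolated read at a fractional index; zero outside the signal."""
    i = math.floor(position)
    frac = position - i
    a = samples[i] if 0 <= i < len(samples) else 0.0
    b = samples[i + 1] if 0 <= i + 1 < len(samples) else 0.0
    return a + frac * (b - a)

def chorus(samples, sample_rate, rate_hz=0.8, base_delay_ms=21.0,
           depth_ms=3.0, mix=0.5):
    """Mix the dry signal with one copy whose delay time is swept by an LFO."""
    out = []
    for n, dry in enumerate(samples):
        lfo = math.sin(2 * math.pi * rate_hz * n / sample_rate)
        delay_ms = base_delay_ms + depth_ms * lfo  # 18 .. 24 ms by default
        wet = read_delayed(samples, n - delay_ms * 0.001 * sample_rate)
        out.append((1.0 - mix) * dry + mix * wet)
    return out
```

Setting `mix` to 1.0 removes the dry signal, which turns this sketch into a vibrato with a longer base delay.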

Common chorus effect controls include:

- Speed/Rate: controls the frequency of the LFO and, therefore, the speed at which the pitch-variation happens in the wet signal.

- Depth/Intensity: controls the amplitude of the LFO and, therefore, the range of delay times the delay circuit will oscillate between.

- Mix: adjusts the mix between the dry (unprocessed) signal and the wet (delay-modulated) signal.

- Low-Cut Filter: a high-pass filter control that filters out modulation in the low-end that could potentially cause issues, especially in stereo chorus effects.

Let's have a look at a few examples of chorus effects units:

- Notable 500 Series chorus unit: TB Audio TBDD Stereo Chorus

- Notable 19″ rack mount chorus unit: TC Electronic 1210

- Notable chorus effect pedal: TC Electronic Stereo Chorus +

- Notable chorus Eurorack module: Feedback 106 Chorus

- Notable chorus plugin: Eventide TriceraChorus

Related articles:

• Top 11 Best Chorus Pedals For Guitar & Bass

• Top 11 Best Chorus Modulation Plugins For Your DAW

• What Are Chorus Pedals (Guitar/Bass FX) & How Do They Work?

Flanger

What is flanger? Flanger is a modulation audio effect whereby a signal is duplicated, and the phase of one copy is continuously being shifted. This changing phase causes a sweeping comb filter effect where peaks and notches are produced in the frequency spectrum or the signal's EQ.

Why is flanger a modulation effect? Flanger is a modulation effect because the delay time parameter of the delay line is modulated via an LFO. The delayed signal is fed back into itself and mixed with the dry signal, causing a comb-filtering effect that is swept across the frequency response. This is the flanger effect.

The flanger effect was first heard by playing two identical tapes in parallel and pressing down on the flange of one of the tapes to cause a comb filter sweep across the combined output as one tape fell out of sync (became more and more delayed relative to the other).

This phase-shifting effect is easily distinguishable by its “jet plane swoosh” or “drainpipe” sounding effect.

Like vibrato and chorus, flanger is achieved by modulating the delay time of a delay line with an LFO. By modulating the delay time, the delayed signal is phase-shifted back and forth.

But instead of having the effect of pitch variation or thickening like vibrato and chorus, respectively, the flanger effect is much more of a filter-like effect.

To get the flanger effect, the delay time of the delay line must be relatively short. This will minimize the effect of adding an additional voice to the sound and maximize the phase interactions between the dry and delayed signals.

In general, we don't want to go over 20 ms, but ideally, we want the delay time to be much shorter than that to maintain a tight enough phase-shift and comb filter effect.

To understand flanger, let's quickly have a look at what phase means.

Phase applies to waveforms. In particular, it is the location of a point within a wave cycle of a repetitive waveform. A repetitive wave will go through 360º as it completes one period (one cycle). At this point, we can say the phase is 360º or 0º.

This can be shown with a simple sine wave:

A phase shift of 90 degrees can be achieved by delaying a secondary signal (like what happens with the flanger's delay path):

At 180º, mixing the two waves together will cause them to cancel each other out:

Note that each frequency has its own wavelength and period (the time it takes to complete one cycle). Therefore, a set delay time will cause some frequencies to cancel out while others will get boosted.

What we're left with is a comb filter, which gets its name from its comb-like appearance.

For example, with a 1 ms delay between two identical signals, we'd have the following comb filter across the frequency spectrum:

Now, if we were to modulate the delay time, we could sweep this comb filter across the frequency spectrum. That is, in essence, the flanger effect.
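The notch positions can be worked out directly. When a signal is summed with an equal-level copy delayed by τ seconds, frequencies whose half-period fits an odd number of times into τ cancel, so the notches fall at odd multiples of 1/(2τ). The sketch below assumes that equal-level, no-feedback case; the function names are illustrative.

```python
import math

def comb_gain(freq_hz, delay_s):
    """Gain of 'dry + delayed copy' at one frequency (0 = notch, 2 = peak)."""
    return abs(2.0 * math.cos(math.pi * freq_hz * delay_s))

def comb_notch_frequencies(delay_s, max_hz=20000.0):
    """Frequencies up to max_hz that cancel completely for a given delay."""
    notches = []
    k = 0
    while (2 * k + 1) / (2.0 * delay_s) <= max_hz:
        notches.append((2 * k + 1) / (2.0 * delay_s))
        k += 1
    return notches
```

For a 1 ms delay, this places notches at 500 Hz, 1,500 Hz, 2,500 Hz and so on, with peaks in between at multiples of 1,000 Hz. Shortening or lengthening the delay slides the whole pattern up or down.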

By allowing the delay path to feed back into itself, we can further intensify the effect by producing resonant peaks in the comb filter sweep. This can be visualized below:

To end things off with flanger, I'll add a simplified signal path of a typical flanger circuit:

Common flanger effect controls include:

- Speed/Rate: controls the frequency of the LFO and, therefore, the speed at which the comb filter sweeps across the frequency spectrum.

- Depth/Intensity/Width: controls the amplitude of the LFO and, therefore, the range of delay times the delay circuit will oscillate between.

- Delay Time: controls the set point about which the LFO will modulate.

- Resonance/Feedback: adjusts the amount of the delayed signal that is fed back into the delay line, thereby adjusting the resonance peaks of the resulting comb filter.

- Mix: adjusts the mix between the dry (unprocessed) signal and the wet (delay-modulated) signal.
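The controls above map fairly directly onto a short delay line with feedback. The following is a minimal sketch, assuming a sine LFO, a circular buffer and a minimum delay of one sample; parameter names mirror the controls but are otherwise illustrative.

```python
import math

def flanger(samples, sample_rate, rate_hz=0.25, delay_ms=2.0, depth_ms=1.5,
            feedback=0.5, mix=0.5):
    """Short modulated delay with feedback, mixed with the dry signal."""
    max_delay = int((delay_ms + depth_ms) * 0.001 * sample_rate) + 2
    buffer = [0.0] * (max_delay + 1)  # circular delay buffer
    size = len(buffer)
    write = 0
    out = []
    for n, dry in enumerate(samples):
        lfo = math.sin(2 * math.pi * rate_hz * n / sample_rate)
        delay = (delay_ms + depth_ms * lfo) * 0.001 * sample_rate
        delay = max(delay, 1.0)  # keep at least one sample of delay
        pos = write - delay      # fractional read position behind the write head
        i = math.floor(pos)
        frac = pos - i
        a = buffer[i % size]
        b = buffer[(i + 1) % size]
        wet = a + frac * (b - a)                # linear interpolation
        buffer[write] = dry + feedback * wet    # feed part of the wet signal back
        write = (write + 1) % size
        out.append((1.0 - mix) * dry + mix * wet)
    return out
```

Keeping `feedback` below 1.0 in magnitude stops the recirculating signal from growing without limit.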

Let's have a look at a few examples of flanger effects units:

- Notable 500 Series flanger unit: Bel BF-20-500

- Notable 19″ rack mount flanger unit: MXR-126 Flanger Doubler

- Notable flanger effect pedal: Electro-Harmonix Stereo Electric Mistress

- Notable flanger Eurorack module: Doepfer A-188-1 BBD

- Notable flanger plugin: D16 Group Antresol

Related articles:

• Top 11 Best Flanger Pedals For Guitar & Bass

• Top 9 Best Flanger Modulation Plugins For Your DAW

• What Are Flanger Pedals (Guitar/Bass FX) & How Do They Work?

Phaser

What is phaser? Phaser is a modulation audio effect whereby a series of peaks and troughs are produced across the frequency spectrum of the signal's EQ. These peaks and troughs vary over time, typically controlled by an LFO, to create a sweeping effect known as phaser.

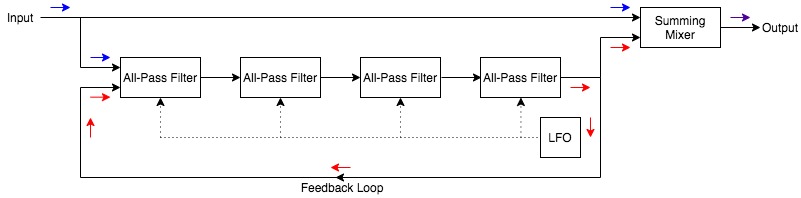

Why is phaser a modulation effect? Phaser is a modulation effect because an LFO modulates the corner frequencies of the cascaded all-pass filters (poles). As the LFO modulates the poles, the notches within the frequency response move up and down, causing the phase-shift sound of phaser.

The phaser effect causes a series of notch filters to sweep across the frequency response, creating a unique effect. It's perhaps the trickiest modulation effect to explain, but I'll do my best to explain it simply.

Rather than modulating a delay circuit, a phaser's LFO is set to modulate a series of all-pass filters. We'll get to that in a second.

The effect the phaser has on a sound is similar to flanger, except the comb filter isn't technically a comb filter. It's actually a series of notch filters that move in unison as the phaser is modulated.

Generally, the phaser's notches are fewer in number and spaced a bit differently. This is why phasers and flangers sound kind of similar but are certainly distinguishable.

Okay, let's get to the part about understanding how phasers work.

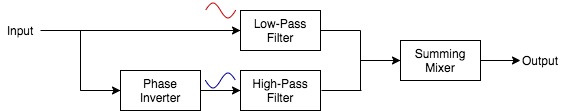

Let's begin with the all-pass filter. This is a filter that passes all frequencies but alters the phase of the frequencies across the frequency spectrum.

An all-pass filter is made of a low-pass filter and a high-pass filter that cross over smoothly at a corner frequency. They are set up so that all frequencies pass and no EQ boosts or cuts take place. However, the high-pass filter acts upon a phase-inverted signal so that the frequencies slowly change phase across the spectrum.

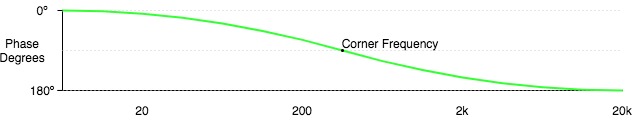

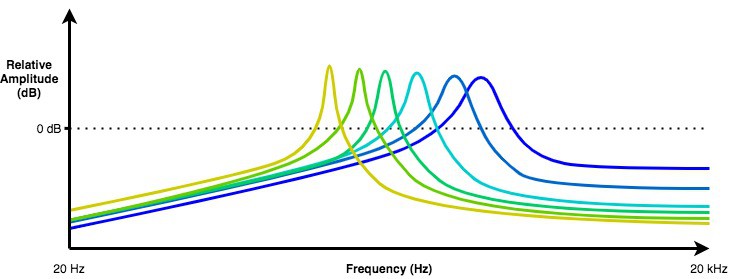

The corner frequency, then, is at 90º. Let's have a look at a few images to help illustrate.

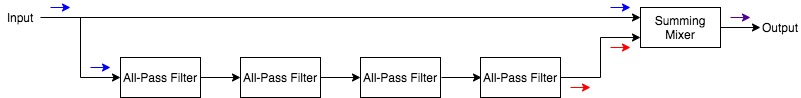

Now let's string a bunch of all-pass filters together in cascade (each one feeds into the next):

In the case above, we have 4 all-pass filters (also known as poles).

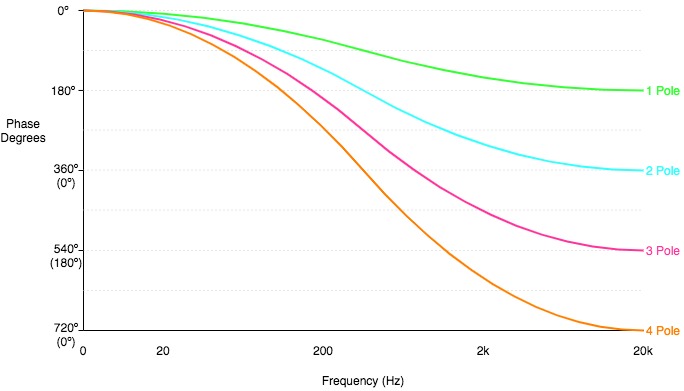

Let's have a look at the phase-shift graph for 1, 2, 3 and 4 pole setups:

Focusing on the 4-pole line, we see that we go 180º (completely out-of-phase) twice. This happens in the low-mid frequencies and again in the mid-ranges.

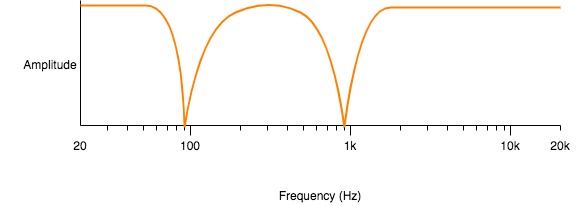

The 4-pole frequency response would look something like this, where the 180º out-of-phase frequencies show up, effectively, as notch filters:

The more poles, the more notches (at a two-to-one ratio).

If we were to modulate the corner frequency of each stage/pole upward and downward within the audible frequency spectrum, we would sweep these notches and have ourselves a phaser effect!
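The two-to-one relationship between poles and notches can be checked numerically. The sketch below assumes idealized first-order all-pass stages that all share one corner frequency, each shifting phase by -2·atan(f/fc) (so -90º at the corner), and an equal mix of dry and phase-shifted signal. Function names are illustrative.

```python
import math

def allpass_phase(freq_hz, corner_hz):
    """Phase shift (radians) of one first-order all-pass stage."""
    return -2.0 * math.atan(freq_hz / corner_hz)

def phaser_gain(freq_hz, corner_hz, stages=4):
    """Normalized gain of dry + shifted signal (1 = in phase, 0 = notch)."""
    total_phase = stages * allpass_phase(freq_hz, corner_hz)
    return abs(math.cos(total_phase / 2.0))

def phaser_notch_frequencies(corner_hz, stages=4):
    """Frequencies where the total phase shift is an odd multiple of 180 degrees."""
    notches = []
    for k in range(stages // 2):
        notches.append(corner_hz * math.tan((2 * k + 1) * math.pi / (2 * stages)))
    return notches
```

With four stages and a 1 kHz corner frequency, this gives two notches, at roughly 414 Hz and 2,414 Hz. Sweeping `corner_hz` with an LFO moves both notches together, which is the sweep described above.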

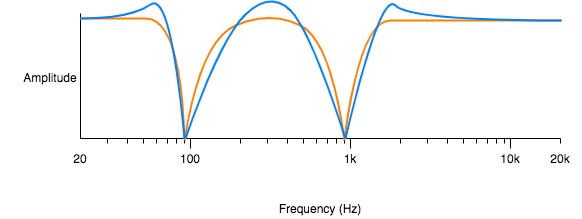

Feeding the final all-pass filter output back into the first all-pass filter input will further intensify the effect by producing resonances:

And there we have it, as concise and simple as I can make it. Here's an illustration of a simplified phaser signal path.

Common phaser effect controls include:

- Speed/Rate: controls the frequency of the LFO, which, in turn, controls the speed at which the comb-type filter will sweep across the signal’s EQ.

- Width: increases or decreases the amplitude of the LFO and, thereby, the range of frequencies the phaser will sweep across.

- Feedback/Resonance: adjusts the amount of the affected signal that is fed back through the phaser circuit, thereby increasing the resonance of each peak within the comb filter and increasing the intensity of the phaser effect.

- Stages/Poles: changes the number of poles (and therefore notches) in the phaser circuit/effect.

- Mix: adjusts the mix between the dry (unprocessed) signal and the wet (phase-shifted) signal.

Let's have a look at a few examples of phaser effects units:

- Notable 19″ rack mount phaser unit: Roland PH-830

- Notable phaser effect pedal: MXR Phase 90

- Notable phaser Eurorack module: Doepfer A-125 VC Phaser

- Notable phaser plugin: Eventide Instant Phaser Mk II


Related articles:

• Top 11 Best Phaser Pedals For Guitar & Bass

• What Are Phaser Pedals (Guitar/Bass FX) & How Do They Work?

• Top 9 Best Phaser Modulation Plugins For Your DAW

Note that uni-vibe is based on the same design principles as phaser.

What is the rotary effect in audio? The rotary effect (aka Leslie effect) was initially produced by the famous Leslie speaker, a unit with a rotating speaker. As the speaker rotates, three separate effects are produced in the [stationary] listener's ears. Those effects are tremolo, the Doppler effect (vibrato) and phasing.

The rotary effect can be produced by a rotating speaker or with an effects unit. These effects units are essentially phasers.

Let's have a look at a few examples of rotary effects units:

- Notable 19″ rack mount rotary effect unit: Dynacord CLS-222

- Notable rotary effect pedal: Leslie Cream Simulator

- Notable rotary effect plugin: Eventide Rotary Mod

Auto-Pan

What is auto-pan? Auto-pan is a modulation effect that moves the audio signal back and forth between two positional points in the mix panorama and can be done in stereo or multi-channel mixes.

Why is auto-pan a modulation effect? Auto-pan is a modulation effect because the panning of the signal is modulated via an LFO that moves the signal between two points in the stereo (or multi-channel) image.

Common auto-pan effect controls include:

- Width: controls the variation in panning from side to side by altering the amplitude of the LFO.

- Offset: adjusts the centre point about which the auto-pan modulates.

- Rate: adjusts the speed at which the panning changes by altering the frequency of the LFO.
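To make these three controls a bit more concrete, here is a minimal Python sketch of a stereo auto-pan. The function name `auto_pan`, the sine-shaped LFO and the constant-power pan law are my own assumptions for illustration; real auto-pan units may use other LFO shapes and pan laws.

```python
import math

def auto_pan(mono, sample_rate, rate_hz=0.5, width=1.0, offset=0.0):
    """Return (left, right) lists with the pan position swept by a sine LFO.

    Pan position runs from -1.0 (hard left) to +1.0 (hard right).
    width scales the LFO amplitude and offset moves the centre point.
    """
    left, right = [], []
    for n, sample in enumerate(mono):
        lfo = math.sin(2 * math.pi * rate_hz * n / sample_rate)
        pan = max(-1.0, min(1.0, offset + width * lfo))
        # Constant-power pan law (an assumption): map pan to an angle in [0, pi/2].
        angle = (pan + 1.0) * math.pi / 4
        left.append(sample * math.cos(angle))
        right.append(sample * math.sin(angle))
    return left, right
```

Calling `auto_pan(signal, 44100, rate_hz=2.0, width=0.5)` would sweep the signal halfway toward each side twice per second.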

Let's have a look at an example of an auto-pan effects unit:

Notable auto-pan effect plugin: Soundtoys PanMan

Soundtoys

Soundtoys is featured in My New Microphone's Top 11 Best Audio Plugin (VST/AU/AAX) Brands In The World.

Ring Modulation

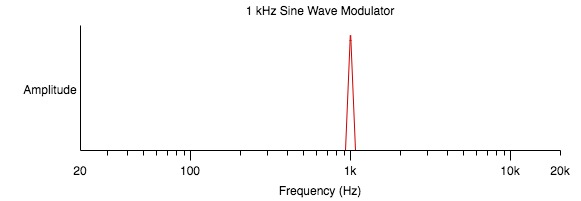

What is the ring modulation effect in audio? Ring modulation is an amplitude modulation effect where two signals (an input/modulator signal and a carrier signal) are multiplied together to create two brand new frequencies, which are the sum and difference of the input and carrier frequencies. The carrier is typically a simple wave selected by the effects unit, while the modulator signal is the input signal.

Why is ring modulation a modulation effect? Ring modulation is effectively a type of amplitude modulation where the carrier signal has a fundamental frequency in/near the audible range (20 – 20,000 Hz). The carrier is modulated by the input to produce sidebands at the output, which are the sum and difference of the two signal frequencies.

Ring modulation is another somewhat tricky effect to explain. Let's get into it by first describing the result of the ring modulation effect.

As we'd expect, ring modulation, at its simplest, has a modulator and a carrier signal. These signals are waveforms and have frequency content. The output of the ring modulator is made of the sidebands of the modulator and carrier. That is the sum and difference of their frequencies.

The effect is most easily shown by using two sine waves. Let's say we have a 1 kHz (1,000 Hz) sine wave as the modulator:

Let's say the carrier is a 900 Hz sine wave. In this case, the ring modulator would output two new frequencies (sidebands):

- 1,000 Hz – 900 Hz = 100 Hz

- 1,000 Hz + 900 Hz = 1,900 Hz
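We can check this two-sine example numerically. The following Python sketch multiplies the two sine waves sample by sample and then measures how much of each frequency is present in the result. The sample rate and the helper names (`sine`, `ring_modulate`, `magnitude_at`) are assumptions made for this illustration only.

```python
import math

SAMPLE_RATE = 8000  # Hz; an assumed rate, comfortably above twice the 1,900 Hz sideband

def sine(freq_hz, num_samples, sample_rate=SAMPLE_RATE):
    return [math.sin(2 * math.pi * freq_hz * n / sample_rate) for n in range(num_samples)]

def ring_modulate(modulator, carrier):
    """Multiply the two signals sample by sample."""
    return [m * c for m, c in zip(modulator, carrier)]

def magnitude_at(signal, freq_hz, sample_rate=SAMPLE_RATE):
    """Amplitude of one frequency component, found by correlating with a cosine/sine pair."""
    re = sum(s * math.cos(2 * math.pi * freq_hz * n / sample_rate) for n, s in enumerate(signal))
    im = sum(s * math.sin(2 * math.pi * freq_hz * n / sample_rate) for n, s in enumerate(signal))
    return 2 * math.hypot(re, im) / len(signal)

modulator = sine(1000, SAMPLE_RATE)  # one second of the 1 kHz input
carrier = sine(900, SAMPLE_RATE)     # one second of the 900 Hz carrier
output = ring_modulate(modulator, carrier)

for freq in (100, 900, 1000, 1900):
    print(freq, "Hz:", round(magnitude_at(output, freq), 3))
```

The levels at 100 Hz and 1,900 Hz should come out at about half the input amplitude, while the levels at 900 Hz and 1,000 Hz should be essentially zero.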

However, most audio signals aren't sine waves and are made up of many different frequencies and harmonics. This can get rather complex, since a pair of sidebands is produced for every frequency in the signal.

When the carrier wave is a sine wave, things remain relatively tame. Each frequency (and even the noise) of the modulator will only be split into two bands.

Things get really wild when the carrier is not a sine wave and, therefore, has harmonics. Each harmonic of the carrier will produce its own 2 sidebands from each frequency in the modulator signal.

Without getting too far down that rabbit hole, allow me to provide another illustrated example.

This time we'll look at a 100 Hz triangle wave modulator signal, limited to its fundamental and its next 4 harmonics. Since a triangle wave contains only odd harmonics, those sit at 300, 500, 700 and 900 Hz.

Let's use a 50 Hz sine wave carrier signal in this example. A pair of sidebands would be produced around each component of the triangle wave:

- The 100 Hz fundamental would become:

  - 100 – 50 = 50 Hz

  - 100 + 50 = 150 Hz

- The 300 Hz third harmonic would become:

  - 300 – 50 = 250 Hz

  - 300 + 50 = 350 Hz

- The 500 Hz fifth harmonic would become:

  - 500 – 50 = 450 Hz

  - 500 + 50 = 550 Hz

- The 700 Hz seventh harmonic would become:

  - 700 – 50 = 650 Hz

  - 700 + 50 = 750 Hz

- The 900 Hz ninth harmonic would become:

  - 900 – 50 = 850 Hz

  - 900 + 50 = 950 Hz
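The arithmetic above is easy to generalize. Here is a small Python sketch that lists the sideband pair for any set of modulator frequencies and a sine wave carrier. The function name `sidebands` is hypothetical, and taking the absolute value of the difference reflects my assumption that a negative difference frequency is heard at the same frequency with its sign dropped.

```python
def sidebands(modulator_freqs_hz, carrier_freq_hz):
    """Map each modulator component to its (difference, sum) sideband pair."""
    return {
        freq: (abs(freq - carrier_freq_hz), freq + carrier_freq_hz)
        for freq in modulator_freqs_hz
    }

triangle_components = [100, 300, 500, 700, 900]  # odd harmonics of 100 Hz
for freq, (lower, upper) in sidebands(triangle_components, 50).items():
    print(f"{freq} Hz -> {lower} Hz and {upper} Hz")
```

If the carrier had harmonics of its own, we could call the same function once per carrier harmonic to see how quickly the number of sidebands grows.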

The ring modulator output, in this example, would resemble the following:

Now let's move on to the modulation aspect.

Ring modulation is nearly identical to amplitude modulation (like the tremolo effect we discussed earlier) except for one key difference.

With ring modulation, the resulting product of the modulator and carrier flips phase as the carrier becomes negative. With amplitude modulation, there is no such phase flip. This can be visualized in the following image:

This makes it so that neither the modulator nor the carrier signals are heard at the output. It's only the sidebands. Some ring modulators offer a mix control to mix in the input/modulator signal to the output.

Relating to the tremolo effect, it's important to note that the carrier oscillator is not an LFO. It must have a much higher frequency (in the audible range) to effectively produce audible sidebands.
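To see the difference between the two in numbers, here is a rough Python sketch. It treats ring modulation as a plain multiplication by a carrier that swings positive and negative, and amplitude modulation as multiplication by the same carrier offset so that it never goes negative. The offset-and-scale form `0.5 * (1 + carrier)` and the helper names are my assumptions for the sake of the example.

```python
import math

SAMPLE_RATE = 8000  # Hz, assumed
INPUT_HZ = 1000     # the input/modulator signal
CARRIER_HZ = 900    # the carrier oscillator

def magnitude_at(signal, freq_hz, sample_rate=SAMPLE_RATE):
    """Amplitude of one frequency component, found by correlating with a cosine/sine pair."""
    re = sum(s * math.cos(2 * math.pi * freq_hz * n / sample_rate) for n, s in enumerate(signal))
    im = sum(s * math.sin(2 * math.pi * freq_hz * n / sample_rate) for n, s in enumerate(signal))
    return 2 * math.hypot(re, im) / len(signal)

times = [n / SAMPLE_RATE for n in range(SAMPLE_RATE)]  # one second
input_signal = [math.sin(2 * math.pi * INPUT_HZ * t) for t in times]
carrier = [math.sin(2 * math.pi * CARRIER_HZ * t) for t in times]

# Ring modulation: the carrier goes negative, so the product flips phase.
ring_mod = [x * c for x, c in zip(input_signal, carrier)]

# Amplitude modulation: the carrier is offset to stay between 0 and 1, so no phase flip.
amp_mod = [x * 0.5 * (1.0 + c) for x, c in zip(input_signal, carrier)]

print("input level after ring modulation:", round(magnitude_at(ring_mod, INPUT_HZ), 3))
print("input level after amplitude modulation:", round(magnitude_at(amp_mod, INPUT_HZ), 3))
```

The input frequency should all but vanish from the ring-modulated output, while it should remain clearly present (at about half its original level here) in the amplitude-modulated output alongside the sidebands.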

The effect gets its name from the analog ring modulator circuit, in which the diodes are arranged in the shape of a ring.

Common ring modulation effect controls include:

- Carrier Frequency: adjusts the frequency of the carrier signal.

- Wave: alters the waveform of the carrier signal.

- Low-Pass Filter: ring modulation can get rather harsh in the high-end. Some ring modulators have an LPF to deal with this.

- Mix: mixes the dry signal in with the sidebands (the dry signal is not outputted by default with ring modulation).

- LFO Section: some ring mods have an additional LFO to control other parameters, furthering the modulation aspects of the effect.

Let's have a look at a few examples of ring modulation effects units:

- Notable 500 Series ring modulator: Meris Ottobit

- Notable 19″ rack mount ring modulator: TC Electronic FireworX

- Notable ring modulation effect pedal: Fairfield Circuitry Randy’s Revenge

- Notable ring modulation Eurorack module: Random*Source Ring

- Notable ring modulation plugin: KiloHearts Ring Mod Snapin

Related articles:

• Top 8 Best Ring Modulation Pedals For Guitar & Bass

• What Are Ring Modulation Effects Pedals & How Do They Work?

Other Effects That Use Modulation

Alright, so now that we've covered the typical modulation effects, let's talk about the other effects that use modulation.

Again, these are standard effects that will have hardware and/or software dedicated to producing the effect. These effects won't generally show up in “modulation effects” categories, though modulation is required, in one way or another, to achieve the desired results.

The other effects that use modulation are:

- Vocoder

- Wah-Wah

- Auto-Wah/Envelope Filter

- Octavers

Vocoder

What is vocoding in audio? Vocoding is the process of analyzing and synthesizing the human voice (or another modulator signal) for audio transformation. A vocoder splits the modulator signal into frequency bands, and a carrier signal is filtered according to the level of the modulator in each of these frequency bands.

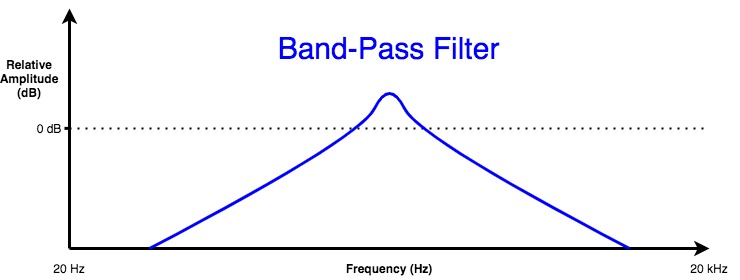

Why is vocoding a modulation effect? The vocoder is a modulation effect because it splits a modulator signal into frequency bands with band-pass filters and measures the level of each band. The carrier signal is split into the same set of bands, and the level of each carrier band is raised/lowered according to the amplitude of the matching modulator band.

The term vocoder is a portmanteau of voice and encoder. Vocoders are a bit of a stand-out in this article since they're better classified as an instrument than an effect. They work by analyzing and synthesizing voice signals to modulate or otherwise control other parameters.

Vocoders have plenty of applications, including, of course, being an electronic musical instrument. So, briefly, how do vocoders work?

In the case of the vocoder, we have two defined inputs. The modulator signal will be the vocal/voice audio, and the carrier signal will be a musical instrument signal (often a keyboard-based synthesizer).

A vocoder works by analyzing the modulator (the vocal/voice) signal. It does so by measuring the amplitudes of the signal within a defined set of frequency bands. These bands are largely defined by band-pass filters, though the first and last band may be defined by a low-pass and high-pass filter, respectively.

A sort of amplitude envelope is generated for each band. Each modulator band's energy (voltage in an analog vocoder) is then sent to an identical set of bands/filters that govern the carrier signal. The level at which each of the carrier signal bands is outputted from the vocoder is modulated by the energy/voltage of the corresponding modulator band.

Let's have a look at a vocoder diagram to help with our explanation (note that this is an analog vocoder with voltage-controlled amplifiers, but the general design is universal):

In the above vocoder diagram, we have 10 bands. Each band/filter is doubled to achieve matching pairs of bands.

The modulator signal is sent through each of the 10 bands in parallel. Each band will analyze a specified frequency range of the signal.

The carrier signal is sent through each of its 10 bands in parallel as well.

The amplitude/voltage of each modulator band is used to control the VCA (voltage-controlled amplifier) of each matching carrier band. This means that the amplitude of the modulator bands controls the output level of the carrier bands.

So if the modulator was a single tone/sine wave that fit into only one band, the output would only contain that band's worth of the carrier signal.

By using vocal/voice signals as the modulator and some sort of synth patch as the carrier, we can modulate the synth to take on a characteristic frequency output of a vocal/voice signal while maintaining the character of the patch itself.

Noise generators can be used to help maintain some of the non-harmonic characteristics of the vocal/voice signal (sibilance, plosives, etc.) since noise is more closely related to these speech factors.
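The band-by-band process described above can be sketched in a few lines of Python. This is only a bare-bones digital stand-in for the analog design, under several assumptions of mine: every band uses a simple second-order band-pass filter (no low-pass/high-pass at the extremes), the envelope is a rectifier followed by a one-pole smoothing filter, and the names `bandpass`, `envelope` and `vocode` are hypothetical.

```python
import math

def bandpass(signal, centre_hz, q, sample_rate):
    """Second-order band-pass filter with unity gain at its centre frequency."""
    w0 = 2 * math.pi * centre_hz / sample_rate
    alpha = math.sin(w0) / (2 * q)
    a0 = 1 + alpha
    b0, b2 = alpha / a0, -alpha / a0
    a1, a2 = -2 * math.cos(w0) / a0, (1 - alpha) / a0
    out = []
    x1 = x2 = y1 = y2 = 0.0
    for x in signal:
        y = b0 * x + b2 * x2 - a1 * y1 - a2 * y2
        x2, x1 = x1, x
        y2, y1 = y1, y
        out.append(y)
    return out

def envelope(signal, sample_rate, smoothing_hz=30.0):
    """Rectify the band, then smooth it so the result follows the band's level."""
    coeff = math.exp(-2 * math.pi * smoothing_hz / sample_rate)
    level, out = 0.0, []
    for x in signal:
        level = coeff * level + (1 - coeff) * abs(x)
        out.append(level)
    return out

def vocode(modulator, carrier, sample_rate, band_centres_hz, q=5.0):
    """Scale each carrier band by the envelope of the matching modulator band."""
    length = min(len(modulator), len(carrier))
    output = [0.0] * length
    for centre in band_centres_hz:
        control = envelope(bandpass(modulator, centre, q, sample_rate), sample_rate)
        carrier_band = bandpass(carrier, centre, q, sample_rate)
        for n in range(length):
            output[n] += control[n] * carrier_band[n]  # the "VCA" for this band
    return output
```

Feeding a single sine wave in as the modulator should leave mostly one band's worth of the carrier at the output, which matches the single-tone case described above.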

There's a lot more to know about vocoders, including the many controls, which we'll discuss shortly. However, this section should give you a solid idea of how vocoders work as modulation-based instruments.

Common vocoder controls include:

- Number of Bands: adjusts the resolution of the modulator by changing the number of frequency bands into which the modulator is split.

- Frequency Range: adjusts the limits of the carrier signal similar to a band-pass filter.

- Bandwidth: adjusts the width of each band and filter.

- Formant: shifts the frequencies (up or down) that are covered by the filters.

- Depth: the amount by which the modulator will affect the carrier.

- Unvoiced: a setting that allows sibilance, plosives and other non-harmonic voice content to more effectively modulate the carrier by adding noise to the carrier, allowing for a more intelligible vocoder effect.

- Mix: mixes the dry (unprocessed) and wet (vocoder output) together at the output.

Let's have a look at a few examples of vocoders:

- Notable vocoder: Korg microKorg

- Notable 19″ rack mount vocoder unit: Roland SVC-350

- Notable vocoder effect pedal: Boss VO-1

- Notable vocoder Eurorack module: L-1 Vocoder

- Notable vocoder plugin: Image-Line Vocodex

Korg

Korg is featured in My New Microphone's Top 11 Best Synthesizer Brands In The World.

Wah-Wah

What is the audio wah effect? Wah (or Wah-Wah) is a filtering effect that is common on guitars and keyboard instruments. Wah is achieved by sweeping one or more boosts in EQ up and down in frequency, thereby mimicking the human vowel sound of “wah”.

Why is wah-wah a modulation effect? Wah-wah is a modulation effect because the user modulates the formant-like EQ boosts, typically with a treadle-type pedal.

Wah effects aim to achieve the same spectral glide as the human voice saying “wah” forward and backward. The modulation of the EQ peaks caused by the effect is designed to resemble the movement of formants in the natural response of the human voice.

Formants are distinctive frequencies that help define a vowel (or consonant) sound. They are particular sensitivities (increases in amplitude) at certain frequency bands. Each vowel will have its own formants, which have a bit more energy than the other frequencies in the sound wave.

Here is a graph that plots the vowel sounds according to their first and second formants.

The wah effect mimics the sound “wah-wah” by modulating a resonant peak across the frequency response of the effect's output. The modulation is controlled by a variable resistor (potentiometer) or another type of expression control. The actual filter of a swept wah effect could look something like this:

The image above shows that the resonant peak is modulated from one extreme (represented by the yellow line) to another (represented by the blue line).
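Here is a rough Python sketch of the same idea: a pedal position moves the centre frequency of a resonant band-pass filter. The 350 Hz to 2,200 Hz sweep range, the exponential pedal curve, the choice of a state-variable filter and the function names are all assumptions on my part rather than the design of any particular wah unit.

```python
import math

def pedal_to_frequency(position, low_hz=350.0, high_hz=2200.0):
    """Map a pedal position in [0, 1] to a peak frequency on an exponential curve."""
    position = max(0.0, min(1.0, position))
    return low_hz * (high_hz / low_hz) ** position

def wah(signal, pedal_positions, sample_rate, q=5.0):
    """Sweep a resonant band-pass (state-variable filter) according to the pedal."""
    low = band = 0.0
    out = []
    for x, position in zip(signal, pedal_positions):
        # Filter tuning coefficient for the current peak frequency.
        f = 2 * math.sin(math.pi * pedal_to_frequency(position) / sample_rate)
        low += f * band
        high = x - low - band / q
        band += f * high
        out.append(band)  # the band-pass output carries the resonant peak
    return out
```

Passing a slowly rising list of pedal positions (for example, `[n / len(signal) for n in range(len(signal))]`) would rock the peak from the heel-down position to the toe-down position over the length of the signal.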

Common wah effect unit controls include:

- Expression Controller: the modulation control, be it a mod wheel, expression pedal, aftertouch, etc.

- Q Controls: adjusts the width of the resonant peak.

- Range: adjusts the frequency range across which the peak is swept.

- EQ (Bass, Mids, Treble): an additional tone control.

- Level: the overall output of the effect unit.

Let's have a look at a few examples of wah-wah effects units:

- Notable 19″ rack mount wah unit: Dunlop Cry Baby DCR1SR

- Notable wah effect pedal: Dunlop Cry Baby GCB-95

- Notable wah Eurorack module: D&D Modules Lord Of The Wah

- Notable wah plugin: Native Instruments Guitar Rig (as an effect)

Related articles:

• Top 14 Best Wah Pedals For Guitar & Bass

• What Are Wah-Wah Guitar Effects Pedals & How Do They Work?

Auto-Wah/Envelope Filter

What is auto-wah or envelope filtering? Auto-wah/envelope filtering is an effect in which the filter of the signal is modulated by the envelope/transients of a signal. These filters, therefore, act according to the dynamic rise and fall of a signal and are most often used on bass, guitar and synthesizer instruments.

Why is auto-wah/envelope filtering a modulation effect? Auto-wah/envelope filtering is a modulation effect because the filter is modulated/controlled by the envelope of the signal.

As the name “envelope filter” would suggest, this modulation effect uses the envelope of the input signal's amplitude to control a filter cutoff frequency that effectively filters the output signal.

This style of modulated filtering is similar to the wah-wah effect mentioned above, except that the modulation is caused automatically by the inherent envelope of the input rather than via an expression pedal, hence the name “auto-wah”.

An envelope filter effect unit will detect the amplitude of the input signal and generate an appropriate envelope. We can visualize this with the following image, where the black line represents the audio signal, and the red line represents the detected envelope:

This envelope will modulate the filter of the effect unit. A low-pass envelope filter will look something like the following (notice how the minimum and maximum envelope markers match up):

The filter will generally have a resonance peak at the cutoff frequency to achieve the wah-like spectral glide, as depicted above.

Envelope filters can utilize band-pass, high-pass or low-pass filters, which can be swept upward or downward for various effects.

Common envelope filter controls include:

- Filter Type: low-pass, band-pass or high-pass.

- Response/Attack: adjusts the attack time of the generated envelope.

- Speed/Decay: adjusts the decay time of the generated envelope.

- Sensitivity: adjusts the amount of amplitude needed to achieve the same amount of filtering.

- Range: adjusts the range of the filter movement across the envelope.

- Q/Peak: adjusts the resonance peak at the cutoff frequency.

- Sweep Direction (Up/Down): changes the direction of the sweep.

- Mix: mixes the dry (unfiltered) and wet (envelope filtered) signals together at the output.
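To tie several of these controls together, here is a minimal Python sketch of an envelope filter. It assumes a rectifier-style envelope follower with separate attack and decay times, an exponential mapping from envelope level to cutoff frequency, and a resonant low-pass built from a state-variable filter. The default values and function names are hypothetical and not taken from any specific unit.

```python
import math

def envelope_follower(signal, sample_rate, attack_s=0.005, decay_s=0.2):
    """Track the rectified input, rising at the attack rate and falling at the decay rate."""
    attack = math.exp(-1.0 / (attack_s * sample_rate))
    decay = math.exp(-1.0 / (decay_s * sample_rate))
    level, out = 0.0, []
    for x in signal:
        rectified = abs(x)
        coeff = attack if rectified > level else decay
        level = coeff * level + (1 - coeff) * rectified
        out.append(level)
    return out

def envelope_to_cutoff(level, sensitivity=1.0, low_hz=200.0, high_hz=3000.0, sweep_up=True):
    """Map the envelope level to a cutoff inside the range, in either sweep direction."""
    amount = max(0.0, min(1.0, level * sensitivity))
    if not sweep_up:
        amount = 1.0 - amount
    return low_hz * (high_hz / low_hz) ** amount

def auto_wah(signal, sample_rate, q=4.0, sensitivity=1.0, sweep_up=True):
    """Resonant low-pass whose cutoff follows the input's own envelope."""
    low = band = 0.0
    out = []
    for x, level in zip(signal, envelope_follower(signal, sample_rate)):
        cutoff = envelope_to_cutoff(level, sensitivity, sweep_up=sweep_up)
        f = 2 * math.sin(math.pi * cutoff / sample_rate)
        low += f * band
        high = x - low - band / q
        band += f * high
        out.append(low)  # low-pass output with a resonance peak at the cutoff
    return out
```

In this sketch, raising `sensitivity` lets quieter playing open the filter further, and setting `sweep_up=False` makes louder notes close the filter instead of opening it.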

Let's have a look at a few examples of envelope filter effects units:

- Notable 500 Series envelope filter unit: Moog The Ladder Envelope Filter

- Notable 19″ rack mount envelope filter unit: Mutronics Mutator

- Notable envelope filter effect pedal: Mu-Tron Micro-Tron IV

- Notable envelope filter plugin: Kuassa Efektor WF3607

Related articles:

• Top 13 Best Envelope Filter Pedals For Guitar & Bass

• What Are Envelope Filter Effects Pedals & How Do They Work?

Octavers

What is the octaver effect? The octaver/octave effect is an audio effect that effectively adds one or more octaves (below or above) to the signal.

Why is the octave effect a modulation effect? Some octave effects utilize amplitude modulation with generated square wave carrier signal(s) that are octave(s) from the input fundamental. These carriers then affect copies of the input signal to raise or drop the signal by one or more octaves, resulting in a less “synthy” sound.

Note that simple monophonic octave generation can be achieved with modulation, though pitch-shifting and harmonization effects cannot. These effects require sampling and DSP to work efficiently in real-time. Today, the vast majority of octave effects also use more accurate DSP technology.
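As a rough illustration of the monophonic, modulation-style approach, here is a Python sketch of an octave-down effect. It assumes one common scheme: a flip-flop toggles at every upward zero crossing of the input, producing a square wave one octave below the input, and that square wave flips the polarity of the input. Whether any particular pedal works exactly this way is an assumption on my part, and the function name `octave_down` is hypothetical.

```python
import math

def octave_down(signal):
    """Flip the input's polarity on every other cycle.

    A flip-flop toggles at each upward zero crossing, which gives a square wave
    at half the input's fundamental frequency. Multiplying the input by that
    square wave produces a strong component one octave below the input.
    """
    out = []
    polarity = 1.0
    previous = 0.0
    for x in signal:
        if previous < 0.0 <= x:  # upward zero crossing detected
            polarity = -polarity
        out.append(polarity * x)
        previous = x
    return out
```

This only tracks cleanly when the input has one clear fundamental, which is in line with the note above that this style of octave generation is monophonic.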

Let's have a look at an example of an octave effects unit:

- Notable modulation-style octaver effect pedal: Boss OC-5

Boss

Boss is also featured in My New Microphone's Top 11 Best Guitar/Bass Effects Pedal Brands To Know & Use.

However, if you're interested in learning about pitch-shift and harmonization effects, check out my articles:

• Top 9 Pitch-Shifting & Harmonizer Pedals For Guitar & Bass

• What Are Pitch-Shifting Guitar Pedals & How Do They Work?

Other Uses Of Modulation In Audio

Though this article isn't necessarily about modulation in general, I thought it would be nice to finish with a few more examples of modulation in the context of audio.

We've discussed the effects. Now let's briefly mention a few other ways in which modulation is used.

Voice modulation refers to any variation in the strength, tone, or pitch of someone's voice. We can see how similar this natural effect is to many of the audio effects discussed above.

To modulate, in music theory, is to change keys within a piece of music.

Though getting into each and every possible modulation scheme for the wireless transfer of audio would take a while, I should mention some of the main ways in which modulation can be used for wireless audio (note that there are different modulation types within the following styles of wireless transmission):

- AM (Amplitude Modulation) Radio

- FM (Frequency Modulation) Radio

- Wireless Audio Transmission Via Radio Waves

- Bluetooth Wireless Audio Transmission

Related articles:

• How Do Wireless Headphones Work? + Bluetooth & True Wireless

• How Do Wireless Microphones Work?

• How To Connect A Wireless Microphone To A Computer (+ Bluetooth Mics)

As we've briefly discussed, synthesizer instruments utilize modulation in all sorts of ways to shape the output audio signal. Here are a few ways in which modulation is used in synths:

- FM (Frequency Modulation) Synthesis

- Synthesizer Modulation Controls

- LFOs

- Envelope Generators

- Modulation Wheels

- Aftertouch

- Step Sequencers

- Knobs/Faders

- Expression Pedals

A Special Note On FM Synthesis

I figured I couldn't discuss vocoders without discussing FM synthesis since they're both instruments. Let's end this article by briefly discussing FM synthesis.

What is FM synthesis? Frequency modulation synthesis is a type of sound/audio synthesis that utilizes modulating oscillators to modulate the frequency of the audio/carrier waveform. Many complex harmonic and inharmonic sounds are possible with this type of synthesis.

Isn't frequency modulation synthesis just vibrato, then? Absolutely not!

Remember that the vibrato effect actually modulates the delay time of a delayed signal. It achieves its pitch variation by compressing and expanding the period of the waveform.

FM synthesis uses its modulator signals to alter the frequency of the carrier itself, making it a completely different type of modulation.

That being said, we can use the pitch-varying effect to help us understand the nature of FM synthesis.

The first thing to note is that vibrato uses an LFO modulator. The variations in pitch, then, are easily perceived.

If the modulator oscillator in an FM synth had a low enough frequency (in the LFO range), we would also hear a variation in the pitch of the synth output.

But what happens as we increase the modulator frequency? The modulation of pitch begins to quicken until it becomes unnoticeable. Around the 20 Hz cutoff point, we begin hearing an increasing modulator frequency as a change in timbre rather than a quickening of pitch variation.

The higher the modulation frequency, the more complex the synthesized output waveform.

So by modulating the frequency of a waveform with an oscillator in the “audible frequency range”, we actually alter the tonality of the waveform and, thereby, synthesize new audio signals and sounds.
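Here is a minimal Python sketch of a single carrier with a single modulator, which is about the simplest FM patch possible. The function name `fm_tone`, the sine wave shapes and the use of a modulation index (peak frequency deviation divided by the modulator frequency) are my assumptions for this example; real FM synths combine several such oscillators in different arrangements.

```python
import math

def fm_tone(carrier_hz, modulator_hz, index, duration_s, sample_rate=8000):
    """Sine carrier whose instantaneous frequency is pushed up and down by a sine modulator.

    index is the modulation index: peak frequency deviation divided by modulator_hz.
    """
    deviation_hz = index * modulator_hz
    phase = 0.0
    out = []
    for n in range(int(duration_s * sample_rate)):
        modulator = math.sin(2 * math.pi * modulator_hz * n / sample_rate)
        out.append(math.sin(phase))
        # Advance the carrier phase by its current (modulated) frequency.
        phase += 2 * math.pi * (carrier_hz + deviation_hz * modulator) / sample_rate
    return out

# A 5 Hz modulator is in the LFO range, so this should be heard as a pitch wobble.
vibrato_like = fm_tone(440, 5, index=4, duration_s=1.0)

# A 220 Hz modulator is in the audible range, so this should be heard as a new timbre.
new_timbre = fm_tone(440, 220, index=2, duration_s=1.0)
```

The only difference between the two calls is the modulator frequency and index, which is the point made above: the same process moves from pitch variation to a change in timbre as the modulator enters the audible range.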

This is, of course, an oversimplification, but it's a good start to understanding the basics of FM synthesis.

Choosing the right effects pedals for your applications and budget can be a challenging task. For this reason, I've created My New Microphone's Comprehensive Effects Pedal Buyer's Guide. Check it out for help in determining your next pedal/stompbox purchase.

Choosing the best audio plugins for your DAW can be a challenging task. For this reason, I've created My New Microphone's Comprehensive Audio Plugins Buyer's Guide. Check it out for help in determining your next audio plugin purchases.

Building out your 500 Series system can be a challenging task. For this reason, I've created My New Microphone's Comprehensive 500 Series Buyer's Guide. Check it out for help in determining your next 500 Series purchases.

Building your Eurorack system can be overwhelming. For this reason, I've created My New Microphone's Comprehensive Eurorack Buyer's Guide. Check it out for help in determining your next Eurorack purchases.

This article has been approved in accordance with the My New Microphone Editorial Policy.